This little experiment wasn’t meant to encourage cheating—far from it. It actually began as a casual conversation with a colleague about just how “cheatable” online tests can be. Curiosity got the better of me, and one thing led to another.

If you’ve come across my earlier post, “Get an A on Moodle Without Breaking a Sweat!” you already know that exploring the boundaries of these platforms isn’t exactly new territory for me. I’ve been down this road before, always driven by curiosity and a love for tinkering with systems (not to mention learning how they work from the inside out).

This specific tool, the LinkedIn-Skillbot, is a project I played with a few years ago. While the bot is now three years old and might not be functional anymore, I did test it back in the day using a throwaway LinkedIn account. And yes, it worked like a charm. If you’re curious about the original repository, it was hosted here: https://github.com/Ebazhanov/linkedin-skill-assessments-quizzes. (Just a heads-up: the repo has since moved.)

Important Disclaimer: I do not condone cheating, and this tool was never intended for use in real-world scenarios. It was purely an experiment to explore system vulnerabilities and to understand how online assessments can be gamed. Please, don’t use this as an excuse to cut corners in life. There’s no substitute for honest effort and genuine skill development.

Technologies

This project wouldn’t have been possible without the following tools and platforms:

- Python: The backbone of the project. Python’s versatility and extensive library support made it the ideal choice for building the bot. It handled everything from script automation to data parsing. You can learn more about Python at python.org.

- Selenium: Selenium was crucial for automating browser interactions. It allowed the bot to navigate LinkedIn, answer quiz questions, and simulate user actions. If you’re interested in web automation, check out Selenium’s documentation at selenium.dev.

- LinkedIn (kind of): While LinkedIn itself wasn’t a direct tool, its skill assessment feature was the target of this experiment. This project interacted with LinkedIn’s platform via automated scripts to complete the quizzes.

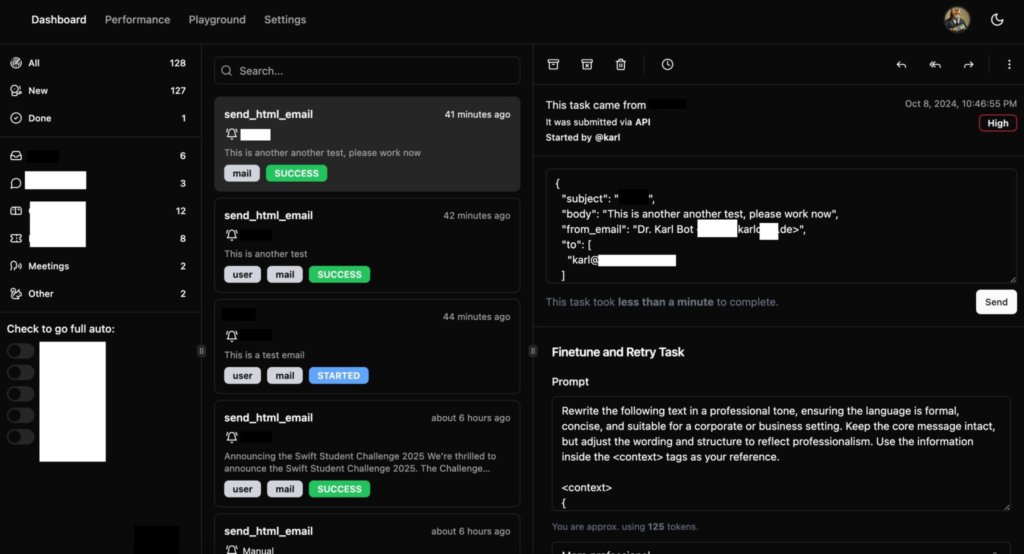

How it works

To get the LinkedIn-Skillbot up and running, I had to tackle a couple of major challenges. First, I needed to parse the Markdown answers from the assessment-quiz repository. Then, I built a web driver (essentially a scraper) that could navigate LinkedIn without getting blocked—which, as you can imagine, was easier said than done.
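The parsing step can be sketched roughly as follows. This is a minimal illustration, not the bot's actual parser: it assumes each question is a Markdown heading of the form `#### Q1. ...` and that the correct options are marked with `- [x]`. The name `parse_quiz` and the sample text are made up for this example.

```python
import re

# Assumed layout: "#### Q1. question text" followed by "- [x] right" / "- [ ] wrong" lines.
QUESTION_RE = re.compile(r"^#{1,6}\s*Q\d+\.\s*(.+?)\s*$")
OPTION_RE = re.compile(r"^\s*-\s*\[( |x|X)\]\s*(.+?)\s*$")


def parse_quiz(markdown: str) -> dict[str, list[str]]:
    """Map each question to the list of options that are ticked as correct."""
    answers: dict[str, list[str]] = {}
    current = None
    for line in markdown.splitlines():
        question = QUESTION_RE.match(line)
        if question:
            current = question.group(1)
            answers[current] = []
            continue
        option = OPTION_RE.match(line)
        # Keep only ticked options that belong to a question we have already seen.
        if option and current is not None and option.group(1).lower() == "x":
            answers[current].append(option.group(2))
    return answers


# Hypothetical sample in the assumed format.
sample = """\
#### Q1. Is earth round?

- [x] Yes
- [ ] No

#### Q2. Which keyword defines a function in Python?

- [ ] func
- [x] def
"""

print(parse_quiz(sample))
```

The result is a plain dictionary from question text to correct answers, which is a convenient shape for the matching step described later.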

Testing was a nightmare. LinkedIn’s blocks kicked in frequently, and I had to endure a lot of waiting periods. Plus, the repository’s answers weren’t a perfect match to LinkedIn’s questions. Minor discrepancies like typos or extra spaces were no big deal for a human, but they threw the bot off completely. For example:

"Is earth round?" ≠ "Is earth round ?"

That one tiny space could break everything. To overcome this, I implemented a fuzzy matching system using Levenshtein Distance.

Levenshtein Distance measures the number of small edits (insertions, deletions, or substitutions) needed to transform one string into another. Here’s a breakdown:

- Insertions: Adding a letter.

- Deletions: Removing a letter.

- Substitutions: Replacing one letter with another.

For example, to turn “kitten” into “sitting”:

- Replace “k” with “s” → 1 edit.

- Replace “e” with “i” → 1 edit.

- Add “g” → 1 edit.

Total edits: 3. So, the Levenshtein Distance is 3.
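To check the arithmetic, here is a small sketch that follows the definition directly: the distance between two prefixes is the cheapest of a deletion, an insertion, or a substitution. The helper name `edit_distance` is made up for this example, and the recursive form is only meant for short strings like these.

```python
from functools import lru_cache


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance via the recursive definition, with memoization."""

    @lru_cache(maxsize=None)
    def d(i: int, j: int) -> int:
        # d(i, j) is the distance between the prefixes a[:i] and b[:j].
        if i == 0:
            return j  # insert the remaining j characters
        if j == 0:
            return i  # delete the remaining i characters
        cost = 0 if a[i - 1] == b[j - 1] else 1
        return min(
            d(i - 1, j) + 1,         # deletion
            d(i, j - 1) + 1,         # insertion
            d(i - 1, j - 1) + cost,  # substitution (free if the characters match)
        )

    return d(len(a), len(b))


print(edit_distance("kitten", "sitting"))                    # 3
print(edit_distance("Is earth round?", "Is earth round ?"))  # 1
```

The second call shows why the stray space from the earlier example is cheap to tolerate: it costs a single edit.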

Using this technique, I was able to identify the closest match for each question or answer in the repository. This resolved the mismatches caused by typos and stray whitespace, so the bot could pick the right answer even when the wording differed slightly.

Here’s the code I used to implement this fuzzy matching system:

    import numpy as np

    def levenshtein_ratio_and_distance(s, t, ratio_calc=False):
        """Return the Levenshtein distance between s and t,
        or a similarity ratio between 0 and 1 if ratio_calc is True."""
        rows = len(s) + 1
        cols = len(t) + 1
        distance = np.zeros((rows, cols), dtype=int)

        # First column and first row: cost of building each prefix from an empty string.
        for i in range(1, rows):
            distance[i][0] = i
        for k in range(1, cols):
            distance[0][k] = k

        for col in range(1, cols):
            for row in range(1, rows):
                if s[row - 1] == t[col - 1]:
                    cost = 0
                else:
                    # For the ratio, a substitution counts as 2 (a deletion plus an insertion).
                    cost = 2 if ratio_calc else 1
                distance[row][col] = min(distance[row - 1][col] + 1,         # deletion
                                         distance[row][col - 1] + 1,         # insertion
                                         distance[row - 1][col - 1] + cost)  # substitution

        # Read the result from the bottom-right cell so empty inputs work too.
        total = int(distance[rows - 1][cols - 1])
        if ratio_calc:
            length = len(s) + len(t)
            return (length - total) / length if length else 1.0
        return total

I also added a failsafe mode that searches all available documents for an answer. If none is found, the bot skips the question and lets you answer it manually.
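The closest-match lookup with a manual fallback might look roughly like this. It is a sketch under assumptions, not the bot's actual code: `best_match`, the `threshold` of 0.9, and the sample dictionary are hypothetical. To keep the snippet runnable on its own, `difflib.SequenceMatcher` from the standard library stands in as the scorer. It is not Levenshtein, and a Levenshtein ratio function could be passed as `score` instead.

```python
from difflib import SequenceMatcher
from typing import Callable, Optional


def stdlib_ratio(a: str, b: str) -> float:
    """Stand-in similarity score between 0.0 and 1.0 (standard library, not Levenshtein)."""
    return SequenceMatcher(None, a, b).ratio()


def best_match(
    question: str,
    answers: dict,
    score: Callable[[str, str], float] = stdlib_ratio,
    threshold: float = 0.9,  # assumed cut-off; tune it against real data
) -> Optional[tuple]:
    """Return (matched question, its answers), or None if nothing is close enough."""
    best_key, best_score = None, 0.0
    for known in answers:
        current = score(question, known)
        if current > best_score:
            best_key, best_score = known, current
    if best_key is None or best_score < threshold:
        return None  # caller falls back to answering manually
    return best_key, answers[best_key]


# Hypothetical parsed answers: question text mapped to correct options.
answers = {
    "Is earth round?": ["Yes"],
    "Which keyword defines a function in Python?": ["def"],
}

print(best_match("Is earth round ?", answers))                # matches despite the extra space
print(best_match("What is the capital of France?", answers))  # None, so answer manually
```

Returning `None` instead of a low-confidence guess mirrors the failsafe idea: a wrong automatic answer is worse than handing the question back to a human.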

Conclusion

This project was made to show how easy it is to cheat on online tests such as the LinkedIn skill assessments. I am not sure if things have changed in the last 3 years, but back then it was easily possible to finish almost all of them in the top ranks.

I haven’t pursued cheating on online exams any further, as my time was better spent on other projects. However, the project taught me a lot about fuzzy string matching and, back then, about web scraping and getting around bot detection mechanisms. These skills have helped me a lot in my cybersecurity career so far.

Try it out here: https://github.com/StasonJatham/linkedin-skillbot