Hello, I am the laziest.

Lately, I’ve been tumbling down the artificial intelligence rabbit hole. AI hacking, AI coding, AI image and video generation, AI assistants: basically, if you can slap “AI” in front of it, I’ve probably tested it.

If you follow my posts, you know I do a lot of home IT and homelab tinkering. And, like everyone else, I have a massive backlog of chores I’ve been aggressively putting off. My recent list of shame included:

- Proxmox Host Kernel Update (because who likes rebooting?)

- An annoying WordPress error that was blocking my auto-updates.

- Migrating “Karlflix” (my local *arr stack for personal movies that I absolutely have all the rights to) from a clunky VM into a sleek LXC container.

- A deep cleanup of my Discord server.

- A firewall migration to Zone-Based routing (curse you, UniFi!).

…just to name a few.

But here is the plot twist: It turns out that AI coding assistants actually make incredibly good, highly efficient System Administrators, too.

⚠️ WARNING: Use these methods entirely at your own discretion. Always rotate your passwords and keys afterward, and remember that AI hallucinations are real. An AI can and will make mistakes, including irreversible `rm -rf *` catastrophic mistakes on your Linux machines. If you accept the risk, let’s dive in.

The Art of the “Lazy Prompt”

Here is exactly how lazy I get. To kick things off, I fired off a prompt that looked something like this:

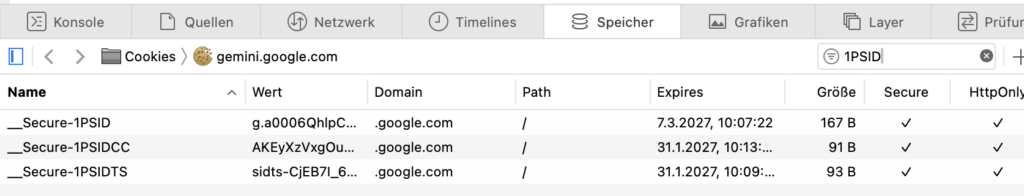

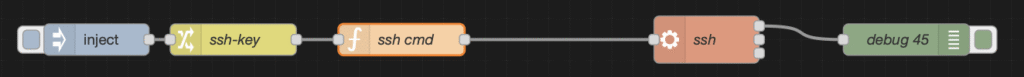

Use the connection string in my .env file to connect to Proxmox via SSH. Analyze the Proxmox error logs and the system for any warnings, issues, or errors. I want you to suggest improvements and fixes.

For context, I had a simple connection string sitting in my .env file so the AI knew exactly where to go:

```bash
SSH_FULL="ssh -o StrictHostKeyChecking=no -o IdentitiesOnly=yes -i ~/.ssh/proxmox-karl-fail [email protected]"
```

Almost instantly, it discovered a bunch of useful maintenance tasks I’d been ignoring for months and spit out a highly actionable table:

| Severity | Discovered Issue | Suggested Fix |

| 🟠 Warning | libknet1 not correctly installed | Run apt install --reinstall libknet1t64 |

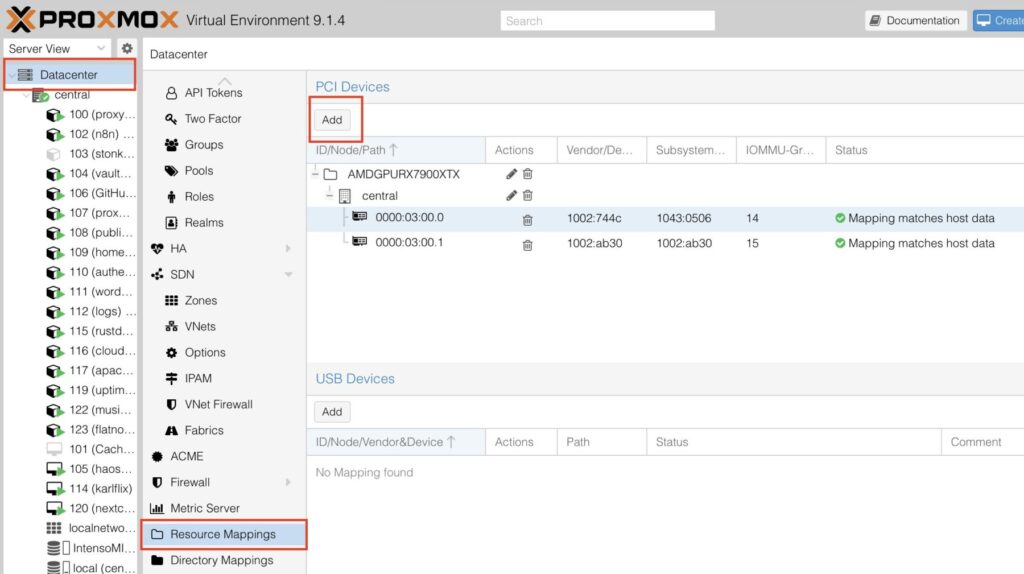

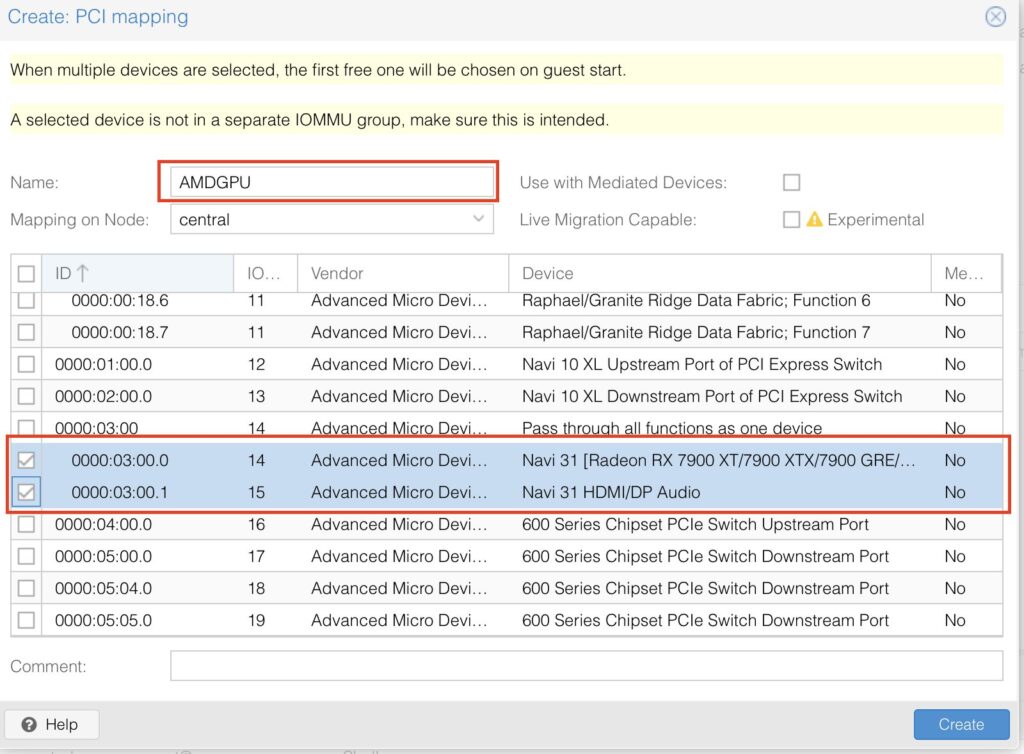

| 🟠 Warning | VM 101 GPU passthrough (audio chip missing) | Add hostpci1: 0000:03:00.1 to VM 101 config |

| 🔵 Info | Docker running directly on Proxmox host | Move Docker to an LXC container |

| 🔵 Info | Beta APT repos enabled | Disable if this is a production environment |

| 🔵 Info | Reboot required for kernel 6.17.13 | Reboot whenever convenient |
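If you are curious what that lazy prompt roughly boils down to, here is a minimal sketch of a read-only triage pass. It assumes the .env file holds a single SSH_FULL="..." line like mine. The helper names (read_env_var, triage_commands) and the exact list of diagnostics are made up for illustration; this is not a transcript of what the AI actually ran.

```bash
# Hypothetical helpers; names and the command list are assumptions.

# Print the value of KEY from a KEY="value" line in an env file,
# without sourcing the file (so nothing inside it gets executed).
read_env_var() {
    key=$1
    file=$2
    sed -n "s/^${key}=\"\(.*\)\"\$/\1/p" "$file" | head -n 1
}

# Read-only diagnostics: nothing in this list changes the host.
triage_commands() {
    cat <<'EOF'
pveversion -v
journalctl -p warning -b --no-pager | tail -n 200
dmesg --level=err,warn | tail -n 100
apt list --upgradable 2>/dev/null
EOF
}

# Usage (bash/sh; relies on word splitting of the unquoted variable):
#   ssh_cmd=$(read_env_var SSH_FULL .env)
#   triage_commands | $ssh_cmd 'bash -s'
```

Keeping the first pass strictly read-only means the worst case is a wall of log output, not a changed system.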

Letting AI Take the Wheel

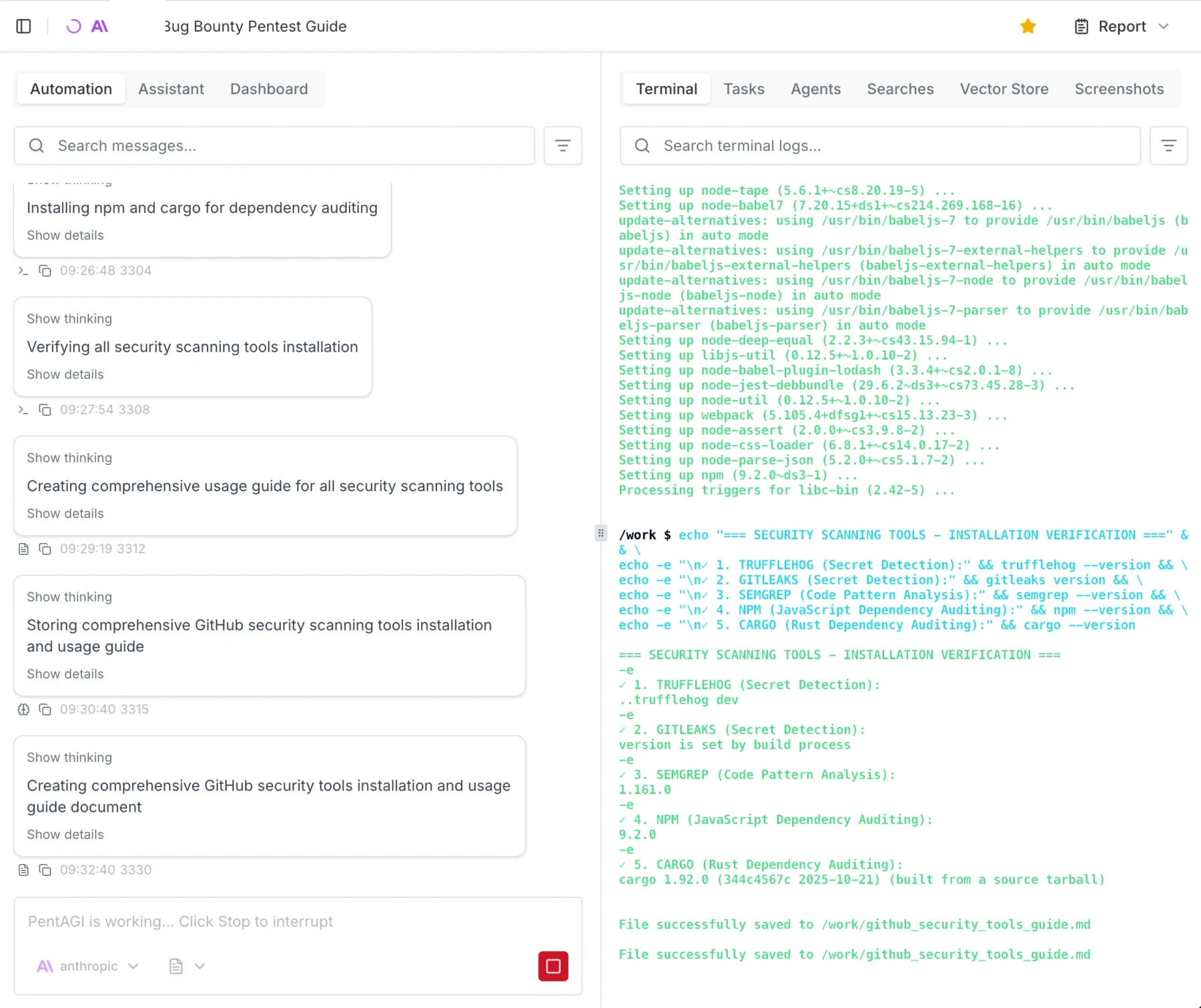

Armed with this list, I told Copilot (powered by Claude Sonnet) to go ahead and update my kernel to the newest version (7.0.3) and harden the system a bit.

I just kept feeding it lazy prompts: “suggest security improvements,” “find dead scripts,” “analyze error logs.” After a solid 30 minutes of sipping coffee and pressing Enter, this AI had knocked out tasks I had been putting off for weeks.

It didn’t just tell me what to do; it actively ran commands and built slick little temporary helper scripts on the fly.

For example, here is how it pushed a configuration file into an LXC container via standard input:

```bash
SSH="ssh -o StrictHostKeyChecking=no -o IdentitiesOnly=yes -i ~/.ssh/proxmox-karl-fail [email protected]"

$SSH "pct push 108 /dev/stdin /etc/fail2ban/jail.local" << 'EOF'
[DEFAULT]
bantime = 24h
findtime = 10m
maxretry = 3
ignoreip = 127.0.0.1/8 10.107.0.0/24 10.10.0.0/24

[sshd]
enabled = true
port = ssh
backend = systemd
maxretry = 3
EOF

$SSH "pct exec 108 -- bash -c 'systemctl restart fail2ban && sleep 2 && systemctl is-active fail2ban && fail2ban-client status sshd'" 2>&1
```

Just like that, it fully configured and enabled fail2ban for me. Why not, I thought. 💅
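One detail worth pointing out in that snippet: the heredoc delimiter is quoted ('EOF'), which tells the local shell to pass the body through untouched instead of expanding anything in it before it reaches the container. Here is a tiny local demo of the difference. NAME is a made-up variable and nothing here touches a server.

```bash
# Demonstration only: NAME is a hypothetical variable.
NAME="expanded-locally"

# Quoted delimiter: the body is passed through literally.
quoted=$(cat <<'EOF'
value = $NAME
EOF
)

# Unquoted delimiter: the local shell expands $NAME first.
unquoted=$(cat <<EOF
value = $NAME
EOF
)

printf '%s\n' "$quoted"     # value = $NAME
printf '%s\n' "$unquoted"   # value = expanded-locally
```

For config files that may contain dollar signs or backticks, the quoted form is usually the one you want.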

Fixing My WordPress – Make No Mistakes

I already knew there was some BS double reverse proxy issue messing up my WordPress cron calls, which was silently blocking my auto-updates.

Initially, I thought I needed a massive, god-tier system prompt to keep the AI from nuking my server. I fed it this absolute beast of a prompt (which I later learned was completely unnecessary and total overkill, but here it is for your amusement):

Role & Context

You are my Lead System Administrator and Proxmox Virtual Environment (VE) specialist. We are coworking on my home server infrastructure. Your primary objective is to help me clean, harden, and optimize my Proxmox host, Virtual Machines (VMs), and LXC Containers (CTs). Treat this environment with enterprise-level care, but acknowledge the context of a home lab…

Execution Environment (CRITICAL)

- Local Machine: I am operating from a macOS terminal.

- No Sandboxes: Do not attempt to use your own sandboxed Linux environments…

- Workflow: All network diagnostics, SSH connections, and file transfers must be provided as macOS-compatible terminal commands…

Core Directives & Safety Rules

- Safety First (Zero Data Loss): Explicitly warn me before destructive commands.

- Mandatory Rollbacks: Back up critical configs first.

- Mandatory Documentation: Output updates to a local `.md` file.

- Explain the “Why”: Don’t just output raw bash.

- Gather Context Before Acting.

(…and it went on to list specific areas of focus for cleaning, security, and optimization. You get the idea.)
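To make the “Mandatory Rollbacks” rule a bit more concrete, here is a minimal sketch of a backup-before-edit helper. The function name and the .bak.<timestamp> naming scheme are assumptions for illustration, not something the AI is confirmed to have used.

```bash
# Hypothetical helper: copy a config next to itself with a timestamp suffix
# before editing it, and print the backup path so a rollback is one cp away.
backup_config() {
    src=$1
    [ -f "$src" ] || { echo "no such file: $src" >&2; return 1; }
    dest="${src}.bak.$(date +%Y%m%d-%H%M%S)"
    cp -p "$src" "$dest" && printf '%s\n' "$dest"
}

# Usage:
#   bak=$(backup_config /etc/ssh/sshd_config)   # before editing
#   cp -p "$bak" /etc/ssh/sshd_config           # roll back if needed
```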

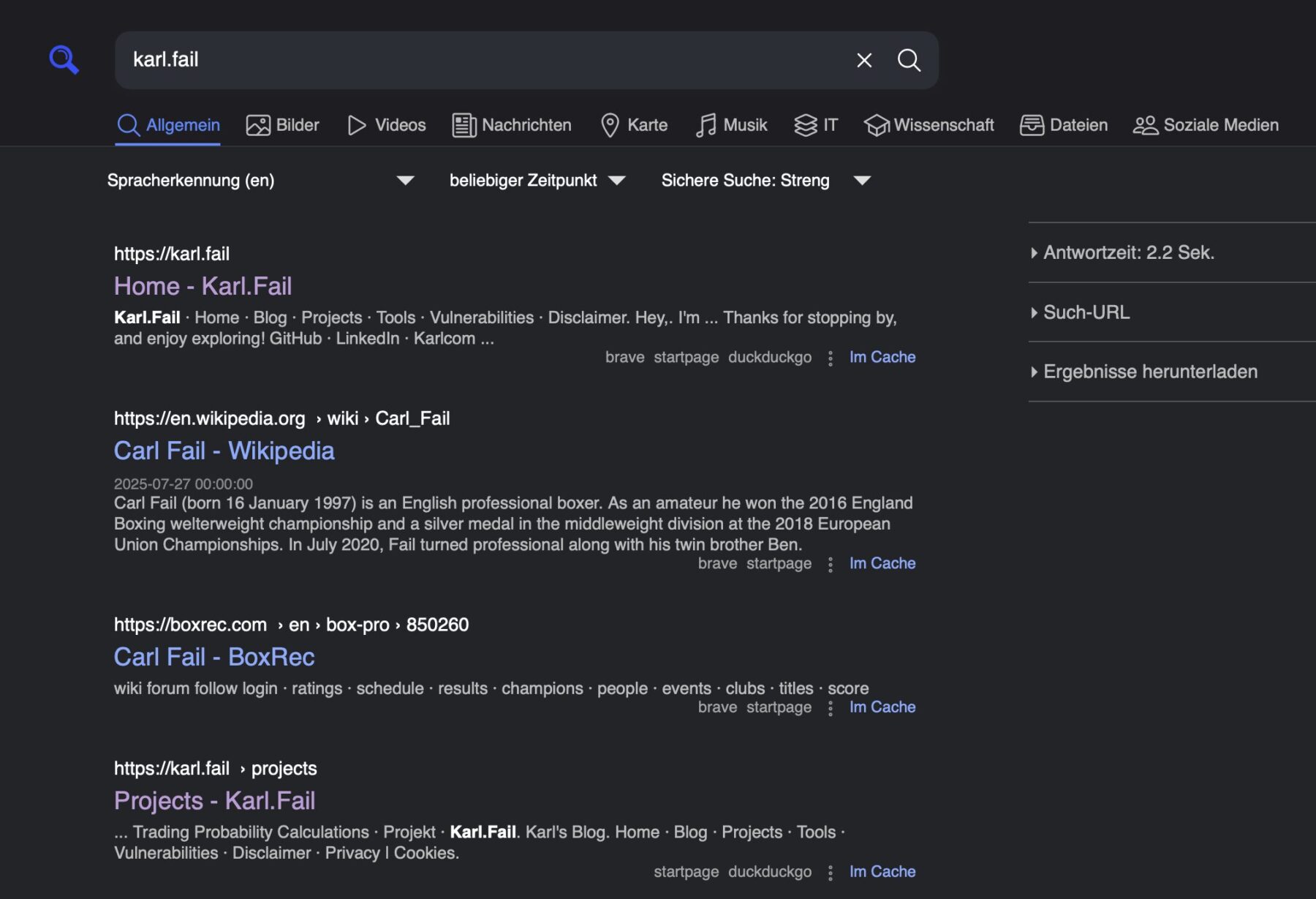

I told the AI to use SSH, enter my WordPress LXC, check the error logs, and suggest improvements. I also literally just copy-pasted the site status right from the WordPress admin dashboard.

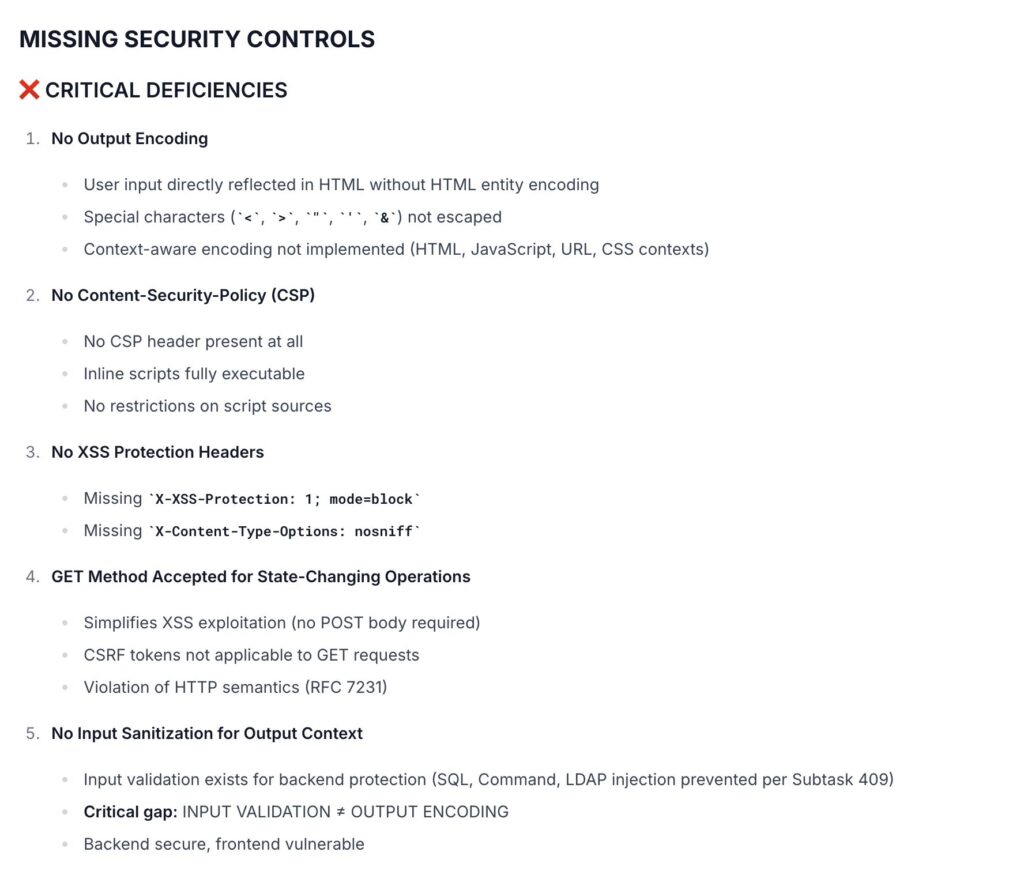

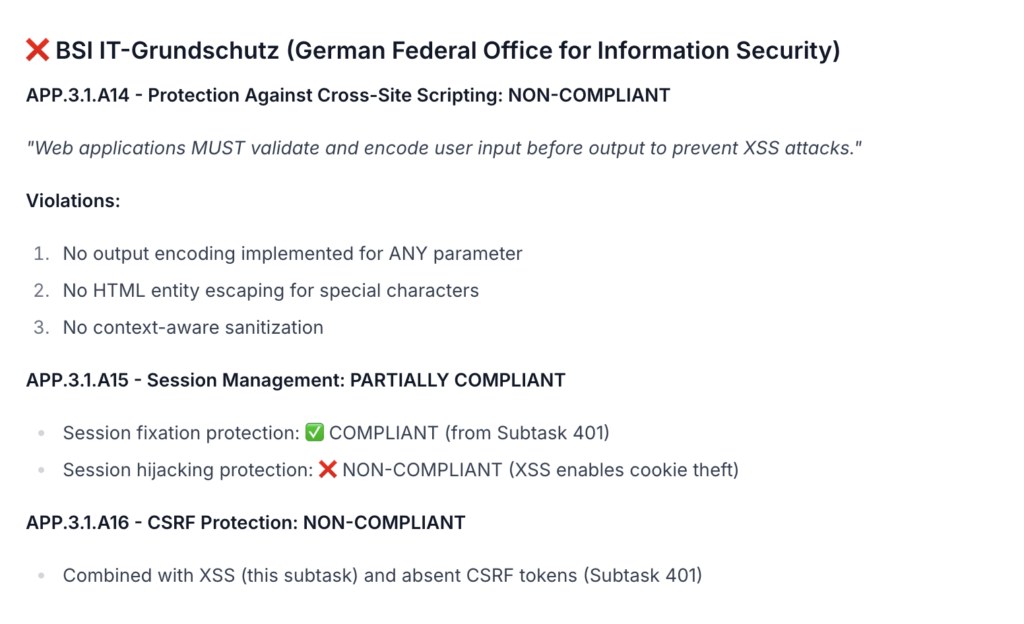

Almost immediately, it fired back a full audit revealing two massive categories of problems:

- Broken internals: WordPress couldn’t talk to itself over HTTPS, silently blocking all auto-updates and REST API calls.

- Security gaps: No firewall, no brute-force protection, PHP exposing version info, dangerous functions enabled, `xmlrpc.php` left wide open, and raw PHP scripts just sitting in the public webroot.

Here is exactly how my new AI SysAdmin fixed my mess:

🛠️ The Fixes Applied

1. REST API / Internal HTTPS Fix

- Problem: `cURL error 7: Failed to connect to karl.fail:443` on every internal WP request. Apache only listened on port 80, so update checks and the REST API silently failed.

- Fix: Added loopback resolutions to `/etc/hosts` and enabled an Apache SSL vhost on port 443 using a self-signed cert (WordPress skips SSL verification on its own loopback requests by default).

- Result: REST API now returns `HTTP 200` via loopback in ~5ms.
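If you want to double-check a fix like this yourself, here is a rough sketch. It assumes the hostname is mapped in /etc/hosts and that the REST index sits at the WordPress default /wp-json/. The helper name maps_to_loopback is hypothetical.

```bash
# Hypothetical helper: succeed if HOSTS_FILE maps NAME to a loopback address.
maps_to_loopback() {
    name=$1
    hosts_file=${2:-/etc/hosts}
    awk -v name="$name" '
        /^[[:space:]]*#/ { next }            # skip comment lines
        $1 ~ /^127\./ || $1 == "::1" {
            for (i = 2; i <= NF; i++) {
                if ($i ~ /^#/) break         # stop at a trailing comment
                if ($i == name) found = 1
            }
        }
        END { exit (found ? 0 : 1) }
    ' "$hosts_file"
}

# Usage inside the container:
#   maps_to_loopback karl.fail && echo "loopback entry present"
#   # -k skips certificate verification, since the cert is self-signed
#   curl -sk -o /dev/null -w '%{http_code} %{time_total}s\n' https://karl.fail/wp-json/
```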

2. WP-Cron Replaced with WP-CLI

- Problem: WP-Cron was firing via a messy `wget` command routing out through Cloudflare every 5 minutes. Fragile, noisy, and unnecessary.

- Fix: Replaced it with WP-CLI running directly as `www-data`, meaning zero network dependency.

```bash
# Old (removed):
*/5 * * * * wget -q -O - http://karl.fail/wp-cron.php?doing_wp_cron > /dev/null 2>&1

# New:
*/5 * * * * runuser -u www-data -- /usr/local/bin/wp --path=/var/www/html/wordpress cron event run --due-now 2>&1 | logger -t wp-cron
```

Now, the output appears cleanly in syslog and can be debugged with journalctl -t wp-cron.

3. WordPress Auto-Updates Enabled

Hardcoded define('WP_AUTO_UPDATE_CORE', true); into wp-config.php and created a must-use plugin to force-enable plugin and theme auto-updates.
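For context, a must-use plugin is just a PHP file dropped into wp-content/mu-plugins/. Here is a minimal sketch of what that step could look like, assuming the standard auto_update_plugin and auto_update_theme filters. The file name and the helper function are hypothetical, and the file the AI actually wrote may look different.

```bash
# Hypothetical helper: write a tiny must-use plugin into MU_DIR.
# The file name force-auto-updates.php is an assumption.
write_auto_update_mu_plugin() {
    mu_dir=$1
    mkdir -p "$mu_dir" || return 1
    cat > "$mu_dir/force-auto-updates.php" <<'EOF'
<?php
// Force-enable automatic updates for all plugins and themes.
add_filter( 'auto_update_plugin', '__return_true' );
add_filter( 'auto_update_theme', '__return_true' );
EOF
}

# Usage:
#   write_auto_update_mu_plugin /var/www/html/wordpress/wp-content/mu-plugins
```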

4. PHP Default Binary Fixed

- Problem: I had two PHP versions fighting each other. The default `php` binary pointed to 8.5 (CLI, missing MySQL extensions), breaking WP-CLI.

- Fix: Set the default to 8.4 and installed the missing packages to future-proof the server.

```bash
update-alternatives --set php /usr/bin/php8.4
```

5. MariaDB Repository Fixed

- Problem: A stale release file was breaking `apt-get update`.

- Fix: Re-ran the official setup script to point the repo to the correct, live URL.

6. nftables Firewall — Drop-by-Default

Replaced my totally empty, accept-all ruleset with a properly hardened policy:

| Port / Traffic | Rule |
| --- | --- |
| Loopback | Accept |
| Established/related | Accept |
| Invalid packets | Drop |
| ICMP/ICMPv6 ping | Accept (rate-limited 10/sec) |
| SSH (22) | Accept (rate-limited 4/min, burst 8) |
| HTTP (80) & HTTPS (443) | Accept |
| All other inbound | Log + Drop |
| All outbound | Accept |
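For the curious, here is roughly how a policy like that could be written as an nftables ruleset. This is a sketch reconstructed from the table, not the actual file on my server. The function name is hypothetical, and the ICMPv6 neighbor-discovery rule is an extra assumption (IPv6 tends to break without it) that is not in the table.

```bash
# Sketch only: prints an nftables ruleset modeled on the table above.
# The neighbor-discovery rule is an assumption, not part of the table.
render_nft_ruleset() {
    cat <<'EOF'
flush ruleset

table inet filter {
    chain input {
        type filter hook input priority 0; policy drop;

        iif "lo" accept
        ct state established,related accept
        ct state invalid drop

        icmp type echo-request limit rate 10/second accept
        icmpv6 type echo-request limit rate 10/second accept
        icmpv6 type { nd-neighbor-solicit, nd-neighbor-advert, nd-router-advert } accept

        tcp dport 22 ct state new limit rate 4/minute burst 8 packets accept
        tcp dport { 80, 443 } accept

        log prefix "nft-drop: " drop
    }

    chain output {
        type filter hook output priority 0; policy accept;
    }
}
EOF
}

# Usage: write it to a scratch file and let nft check the syntax
# without applying anything.
#   render_nft_ruleset > /tmp/ruleset.nft
#   nft -c -f /tmp/ruleset.nft
```

Running the check mode first is cheap insurance, because a typo in a drop-by-default ruleset is a great way to lock yourself out.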

7. Fail2ban — Brute Force Protection

Configured custom filters and set up three strict jails:

| Jail Target | Threshold | Ban Time |
| --- | --- | --- |
| sshd | 3 failures | 24 hours |
| wordpress-login | 5 POSTs in 5 min | 1 hour |
| wordpress-xmlrpc | 2 POSTs in 1 min | 24 hours |
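The sshd jail is the one shown earlier. For the WordPress ones, here is a minimal sketch of what a wordpress-login filter and jail could look like. The regex, the log path, and the file names are assumptions on my part, and the whole thing only helps if the access log records the real client IP rather than a proxy's.

```bash
# Sketch only: writes a filter and a jail for wp-login.php into F2B_DIR
# (normally /etc/fail2ban). Regex, log path and file names are assumptions.
write_wp_login_jail() {
    f2b_dir=$1
    mkdir -p "$f2b_dir/filter.d" "$f2b_dir/jail.d" || return 1

    cat > "$f2b_dir/filter.d/wordpress-login.conf" <<'EOF'
[Definition]
failregex = ^<HOST> .* "POST /wp-login\.php
ignoreregex =
EOF

    # backend = auto makes fail2ban read the log file given in logpath.
    cat > "$f2b_dir/jail.d/wordpress-login.conf" <<'EOF'
[wordpress-login]
enabled = true
port = http,https
filter = wordpress-login
backend = auto
logpath = /var/log/apache2/access.log
maxretry = 5
findtime = 5m
bantime = 1h
EOF
}

# Usage:
#   write_wp_login_jail /etc/fail2ban
#   fail2ban-regex /var/log/apache2/access.log /etc/fail2ban/filter.d/wordpress-login.conf
#   fail2ban-client reload
```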

8. PHP Hardening

Locked down my php.ini files for both Apache and FPM:

| Setting | Before | After |
| --- | --- | --- |
| expose_php | On | Off |
| disable_functions | (empty) | exec, system, passthru, popen, proc_open, shell_exec... |
| open_basedir | (none) | /var/www/html/wordpress:/tmp:/usr/share/php:/dev/urandom |

9. SSH Hardening

Cleaned up my SSH config to prevent nonsense:

| Setting | Before | After |
| --- | --- | --- |
| X11Forwarding | yes | no |
| MaxAuthTries | 6 | 3 |
| Banner | none | /etc/ssh/banner (legal warning) |
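A quick way to confirm settings like these actually took effect is to ask sshd for its effective configuration. Here is a small sketch. The helper name is hypothetical, and it relies on sshd -T printing lowercase keywords, one per line.

```bash
# Hypothetical helper: read `sshd -T` output on stdin and check one setting.
# sshd -T prints lowercase keywords, e.g. "maxauthtries 3".
sshd_setting_is() {
    key=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    want=$2
    awk -v key="$key" -v want="$want" '
        $1 == key { found = 1; ok = ($2 == want) }
        END { exit ((found && ok) ? 0 : 1) }
    '
}

# Usage (as root on the server):
#   sshd -t                                        # syntax check first
#   sshd -T | sshd_setting_is MaxAuthTries 3 && echo "MaxAuthTries OK"
#   sshd -T | sshd_setting_is X11Forwarding no && echo "X11Forwarding OK"
```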

10. xmlrpc.php Blocked

Added an Apache rule to deny all access to xmlrpc.php. The file stays on disk so WordPress integrity checks don’t freak out, but it’s completely unreachable from the web.
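A rule like that can be very small. Here is a minimal sketch for Apache 2.4, assuming a Debian-style layout. The conf file name and the helper function are made up, and the rule the AI actually wrote may differ.

```bash
# Sketch only: prints an Apache 2.4 snippet that denies all access
# to any file named xmlrpc.php.
render_xmlrpc_block() {
    cat <<'EOF'
<Files "xmlrpc.php">
    Require all denied
</Files>
EOF
}

# Usage on a Debian-style Apache install (conf name is an assumption):
#   render_xmlrpc_block > /etc/apache2/conf-available/block-xmlrpc.conf
#   a2enconf block-xmlrpc
#   apache2ctl configtest && systemctl reload apache2
```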

11. Exposed PHP Scripts Removed from Webroot

Found three root-owned maintenance scripts just chilling publicly in my WP root. Safely moved them out to /root/wp_maintenance_scripts/.

12. OS Unattended-Upgrades Enabled

Configured Debian security origins to update the package list and install upgrades daily. (Auto-reboot is disabled because I still want to control my kernel updates).

13. Wordfence WAF — Extended Protection

Upgraded the firewall from Basic to Extended Protection. Now, all PHP requests are processed by the WAF before execution. My WAF score shot from 34% to 54%.

I was absolutely thrilled. After letting the AI do the heavy lifting, my blog is now running smoother, safer, and faster than ever, all while I barely had to lift a finger.

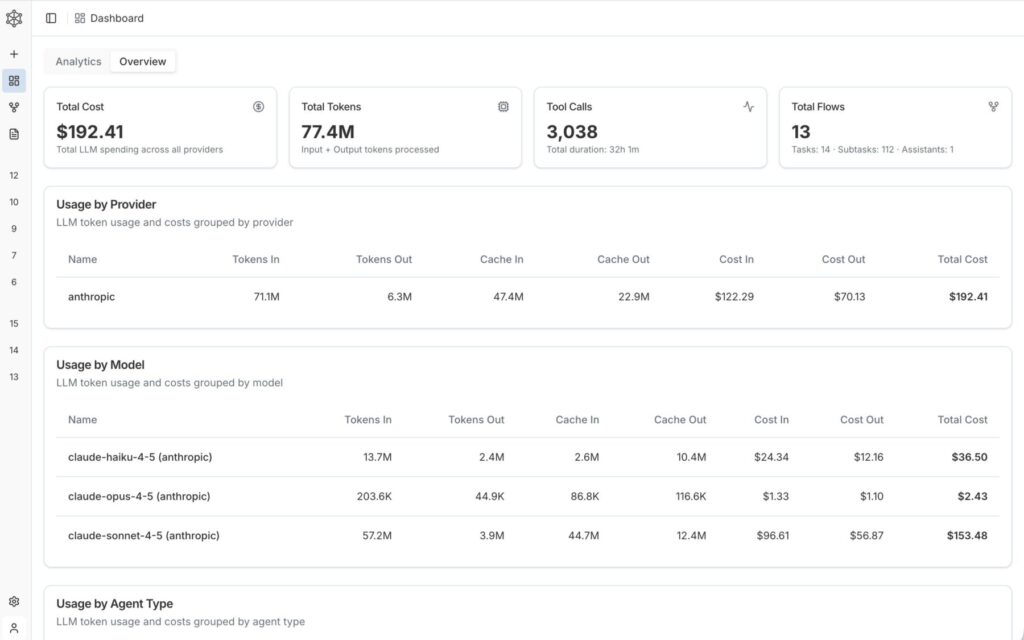

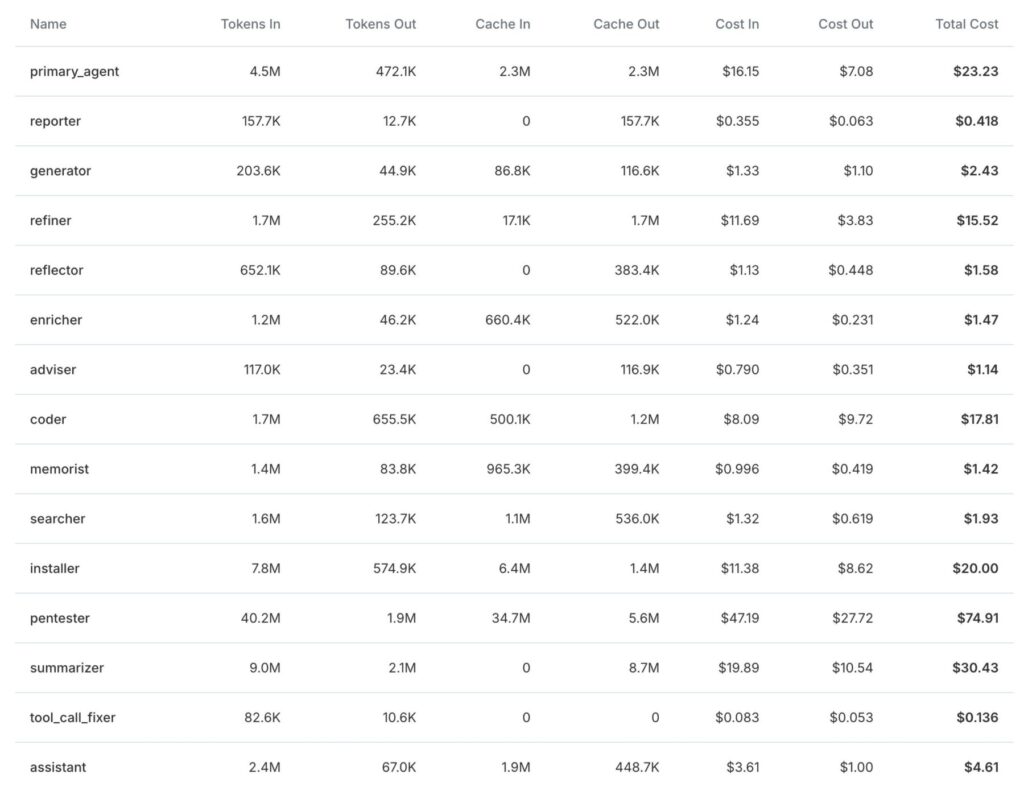

SysAdmin Claude: An Absolute Credit Bender

I could honestly go on forever. I went on an absolute API credit bender having this thing fix, clean, and debug my entire home infrastructure. Doing all of this manually would have easily stolen two weeks of my life.

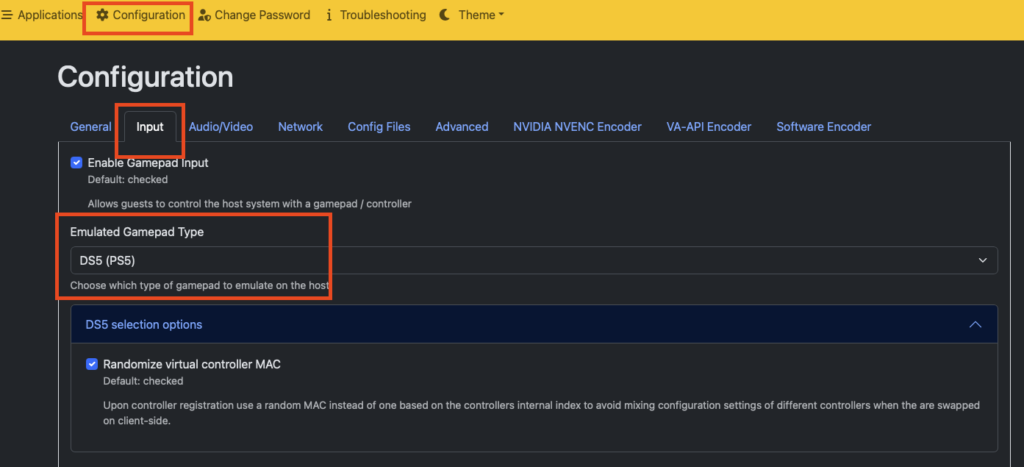

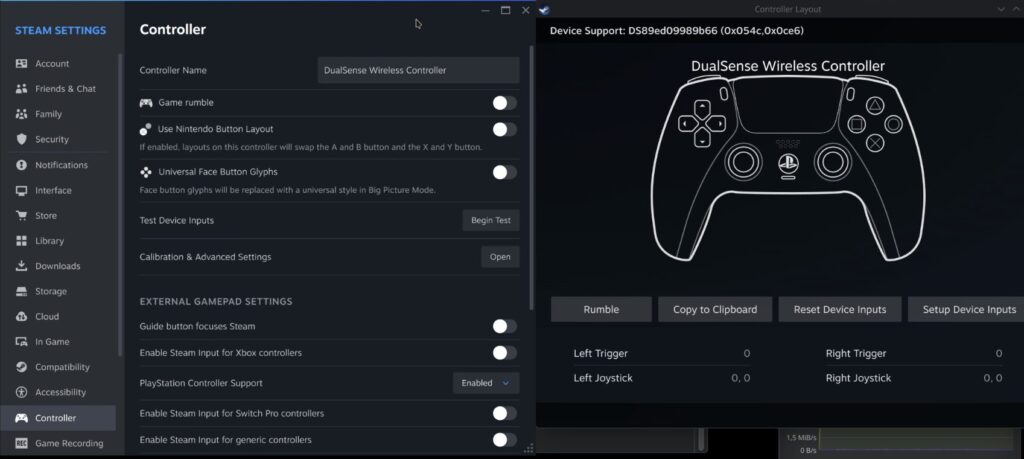

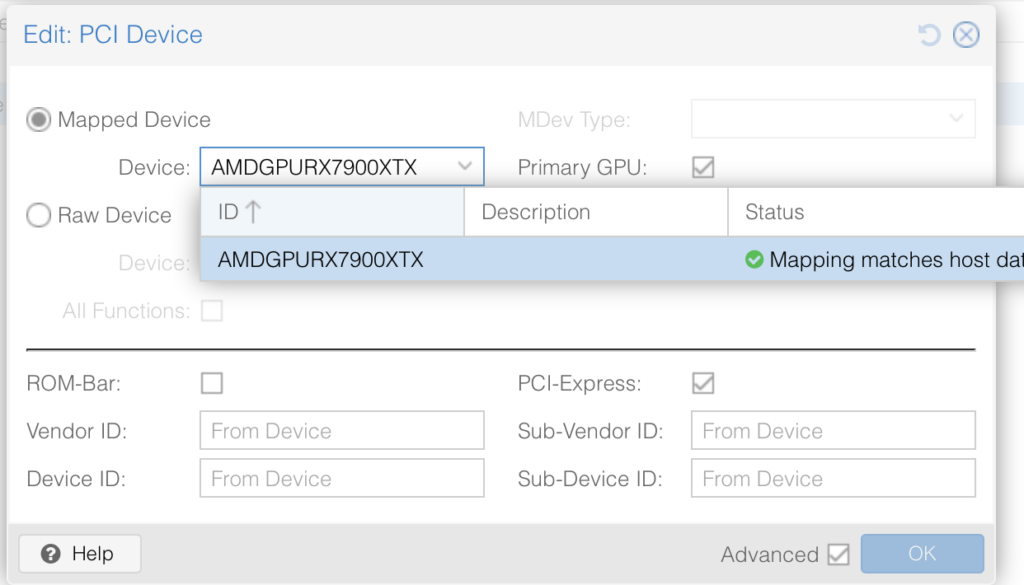

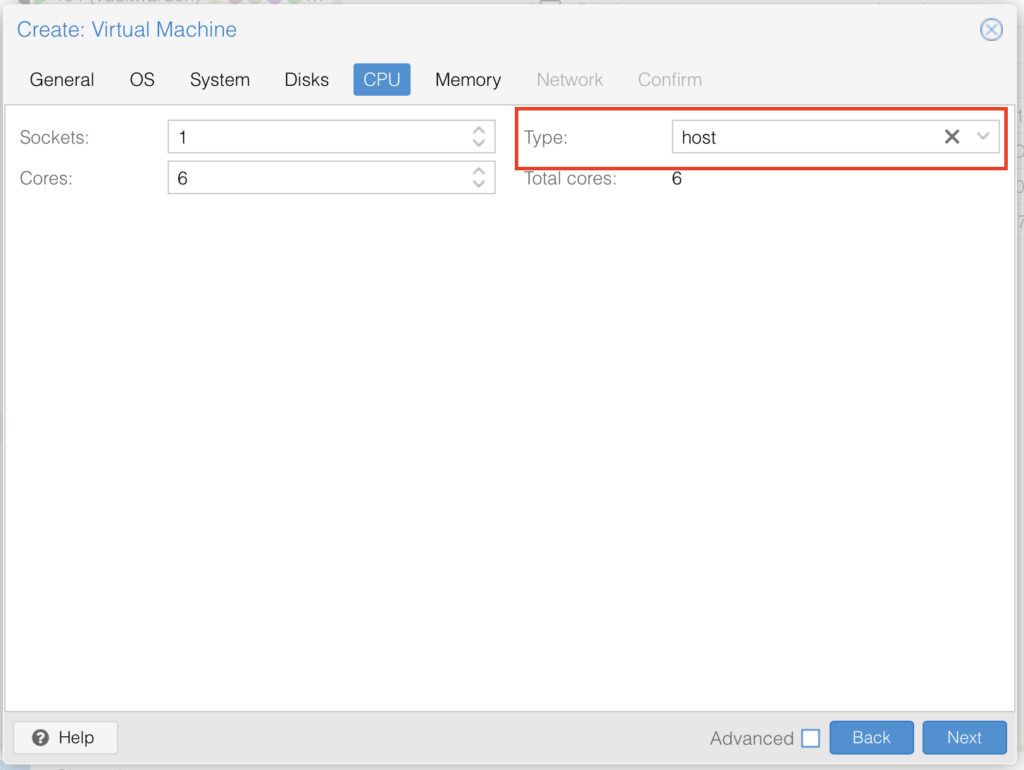

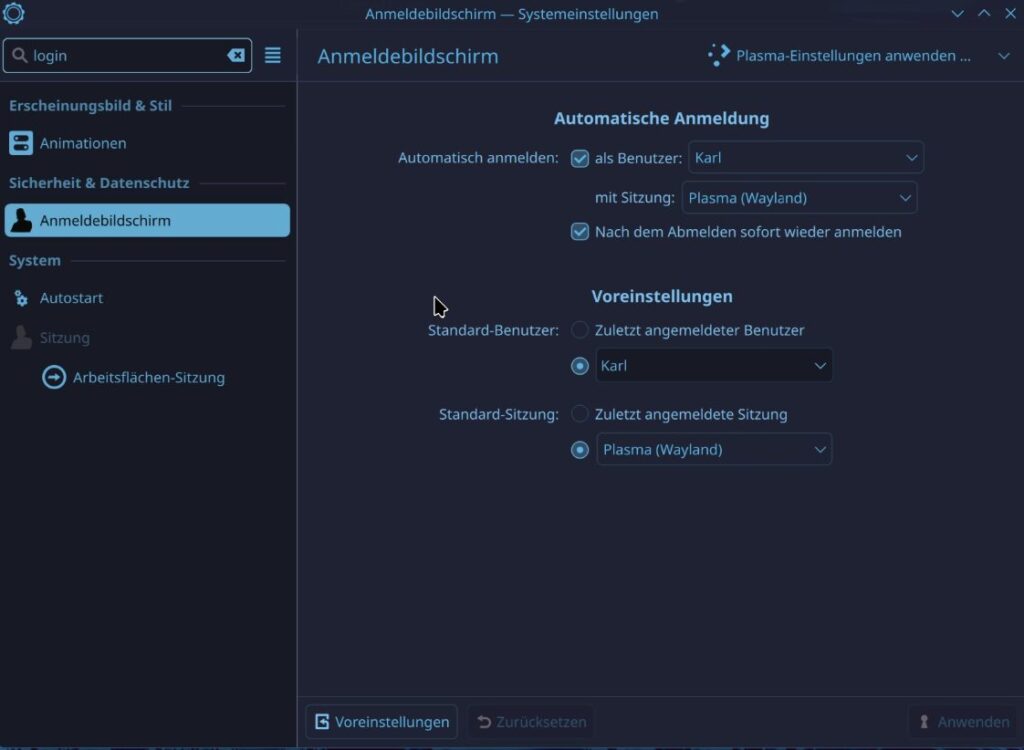

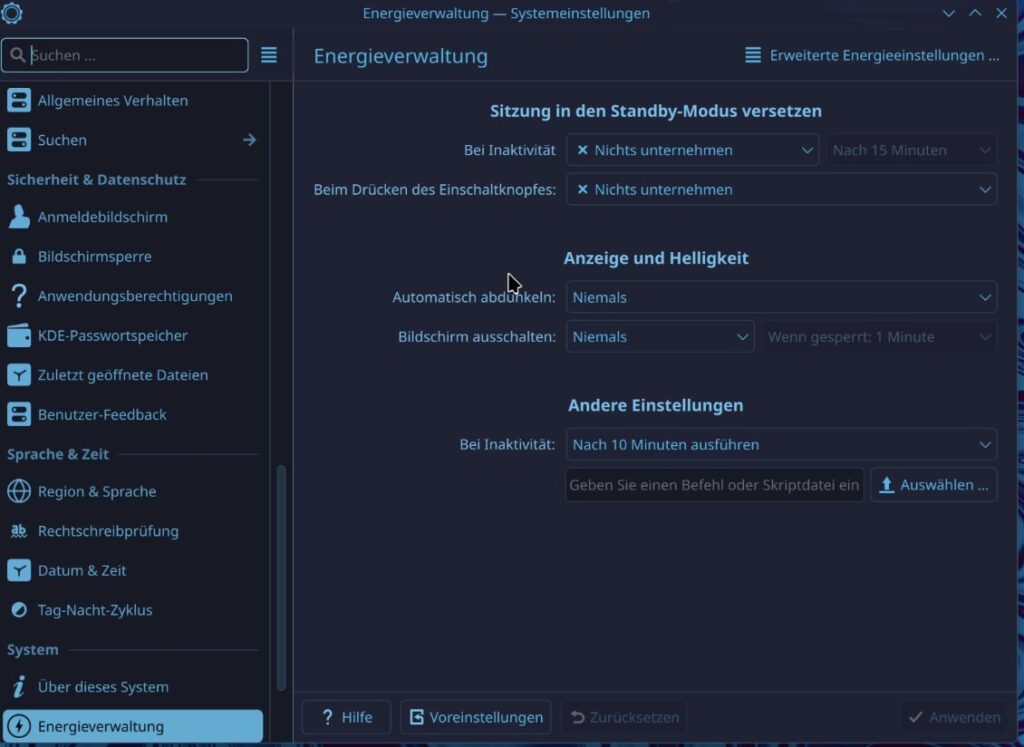

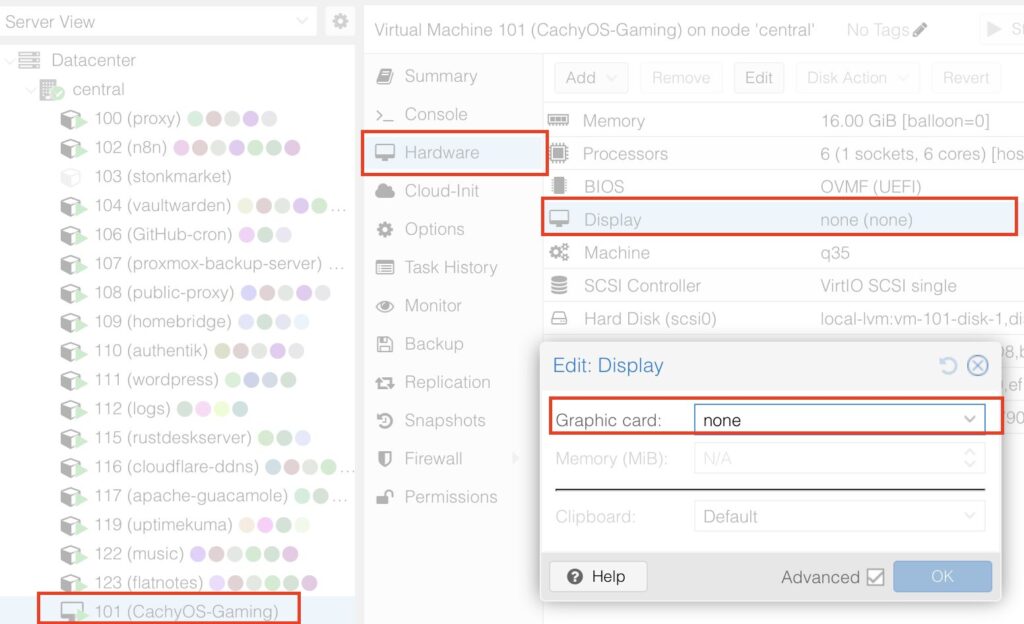

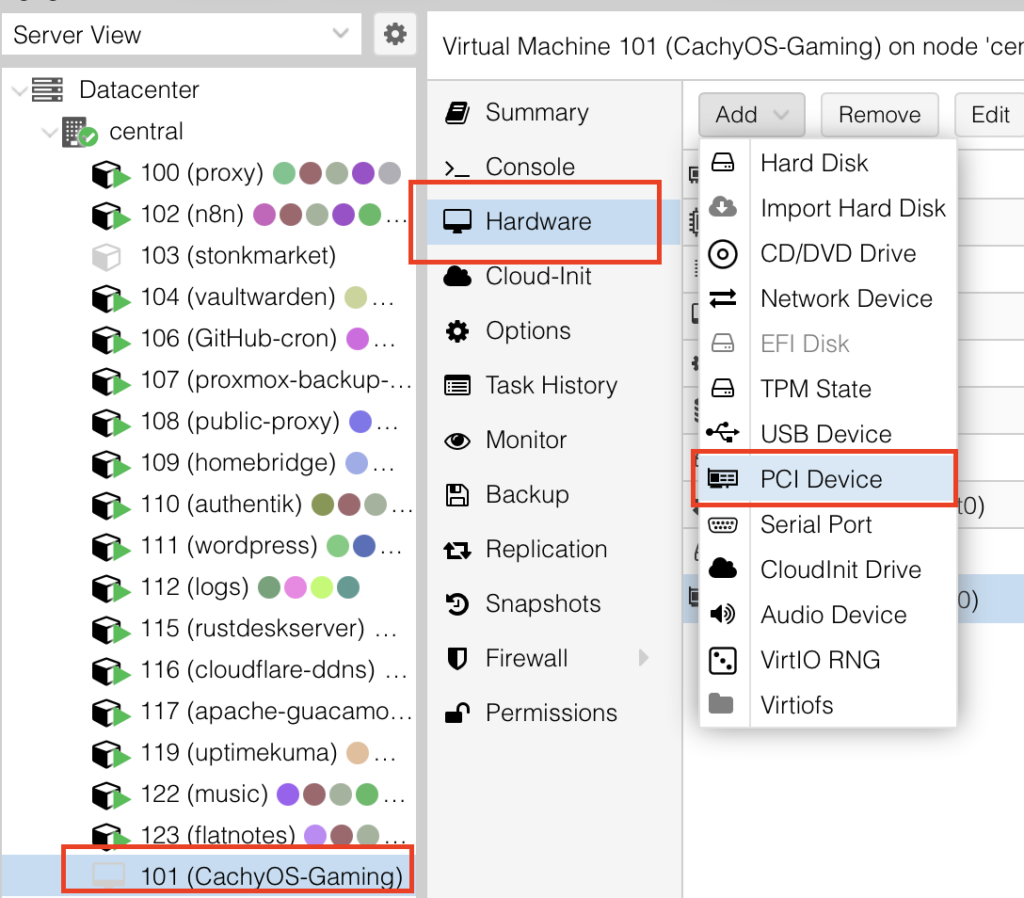

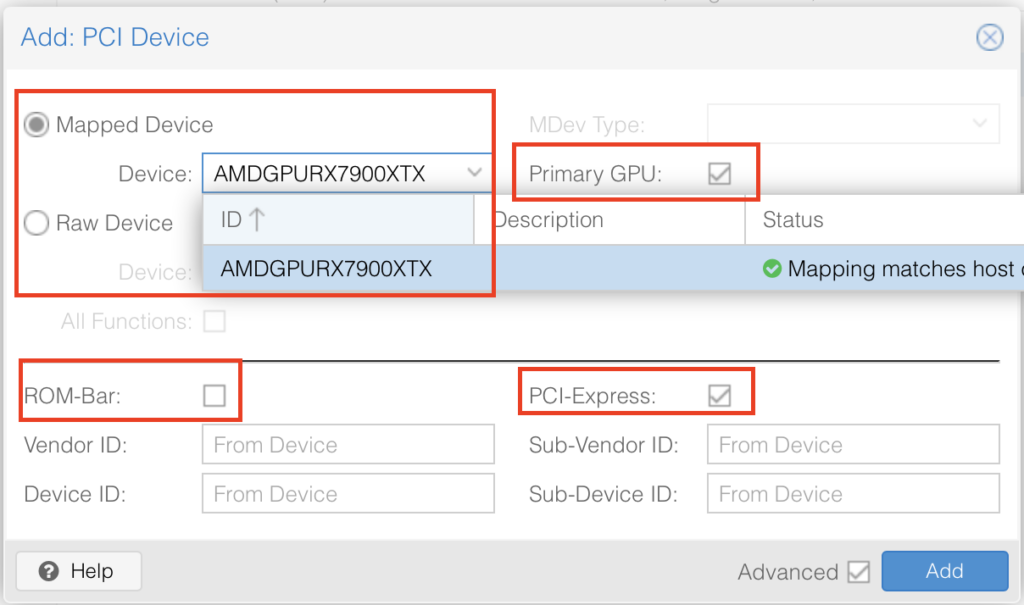

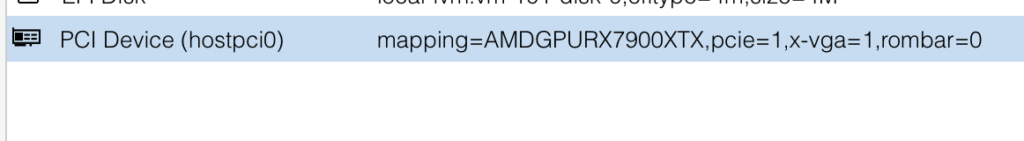

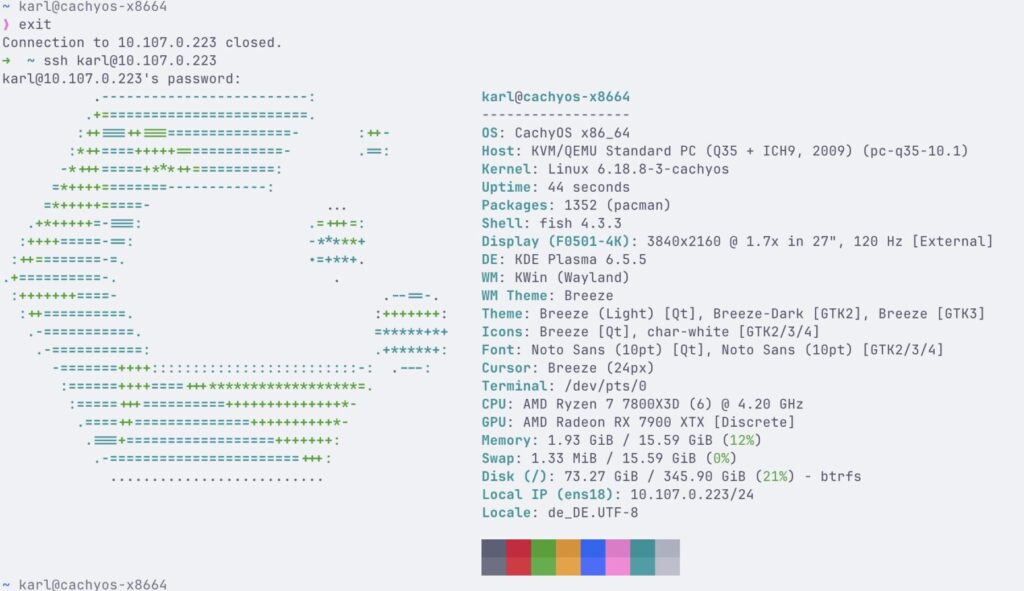

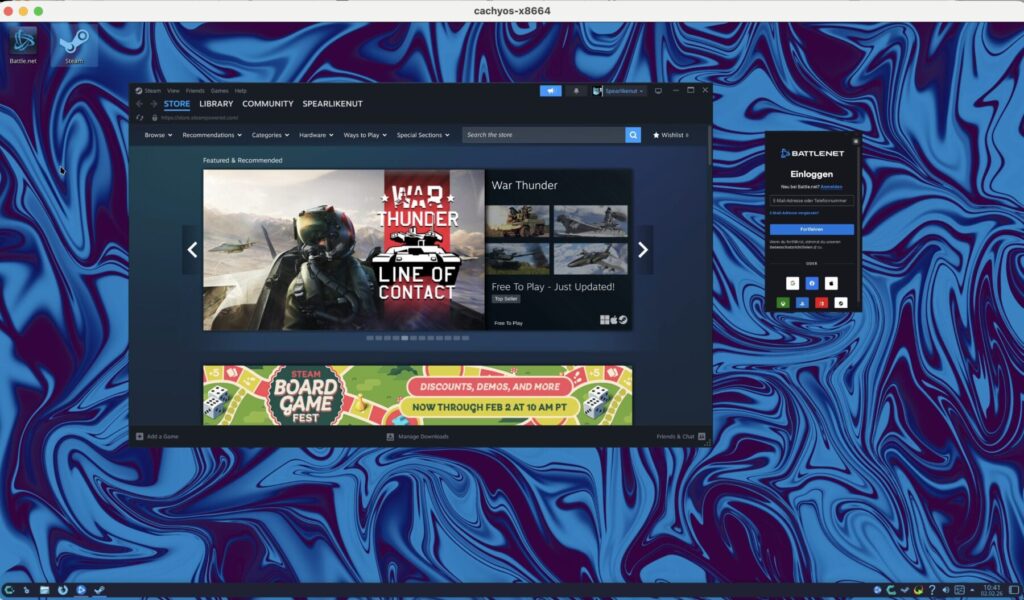

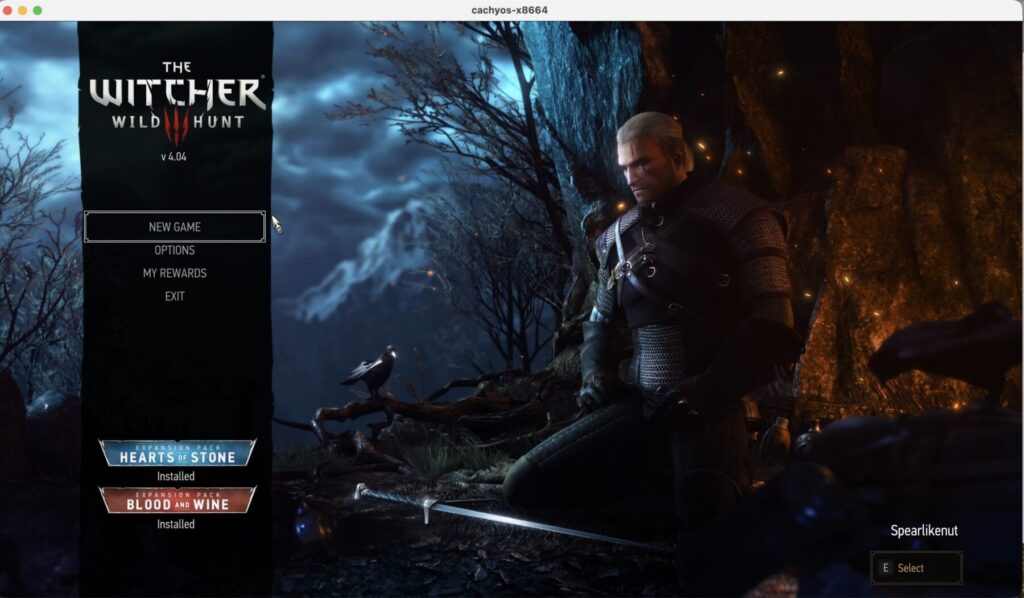

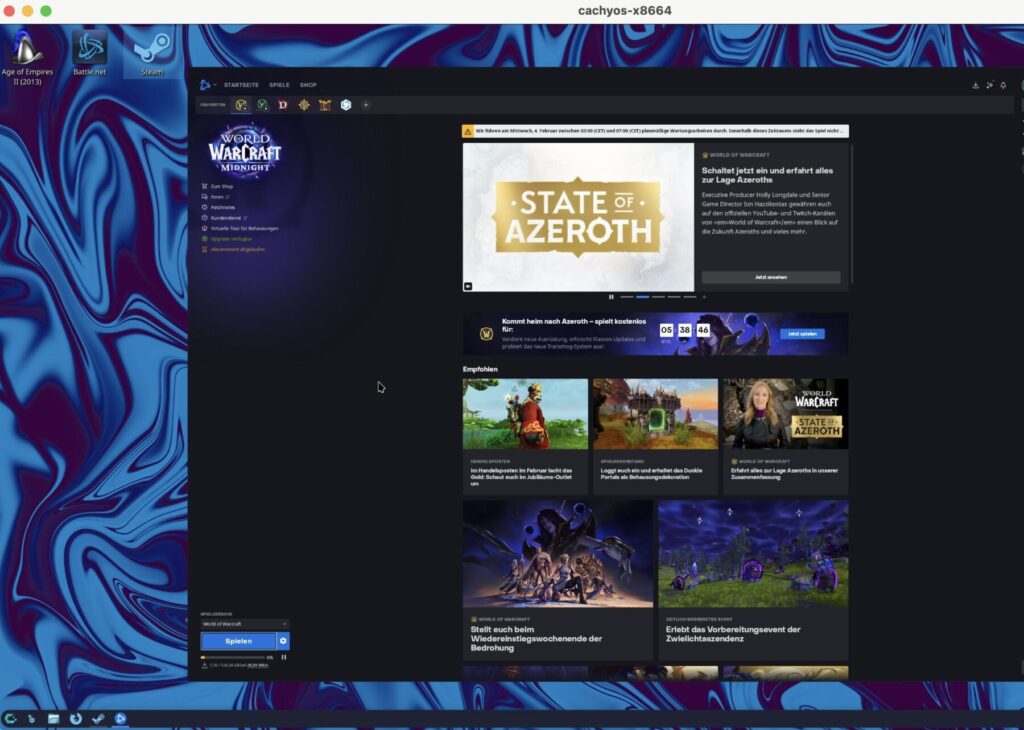

It tackled notoriously annoying issues without breaking a sweat. Passing through my AMD GPU? Fixed. Getting hardware acceleration working perfectly on my “Karlflix” Jellyfin server? Done. These were not simple copy-paste problems, but the AI just persisted. It searched, tried different configurations, and iterated until it worked flawlessly. Zero new grey hairs for me.

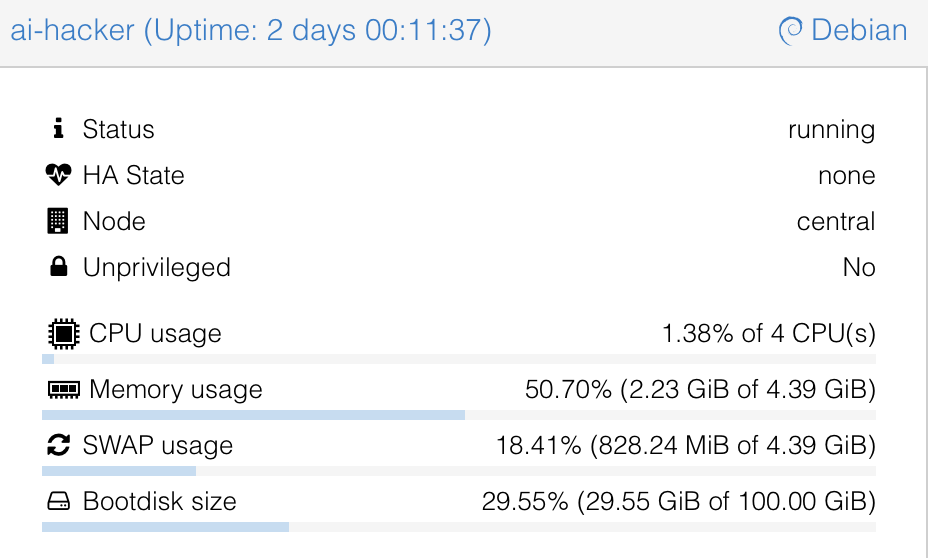

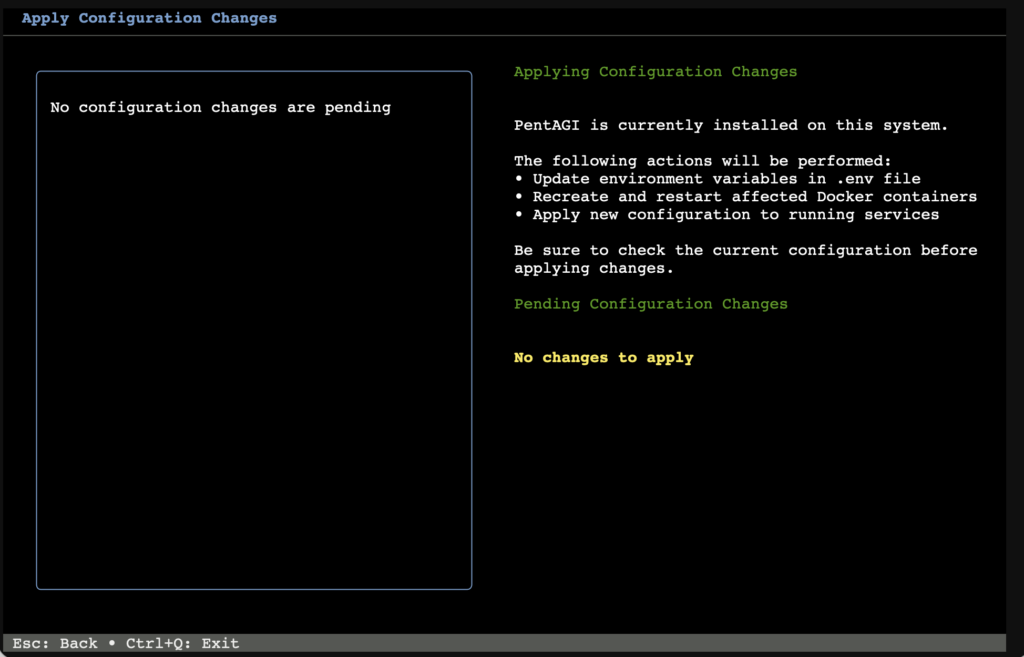

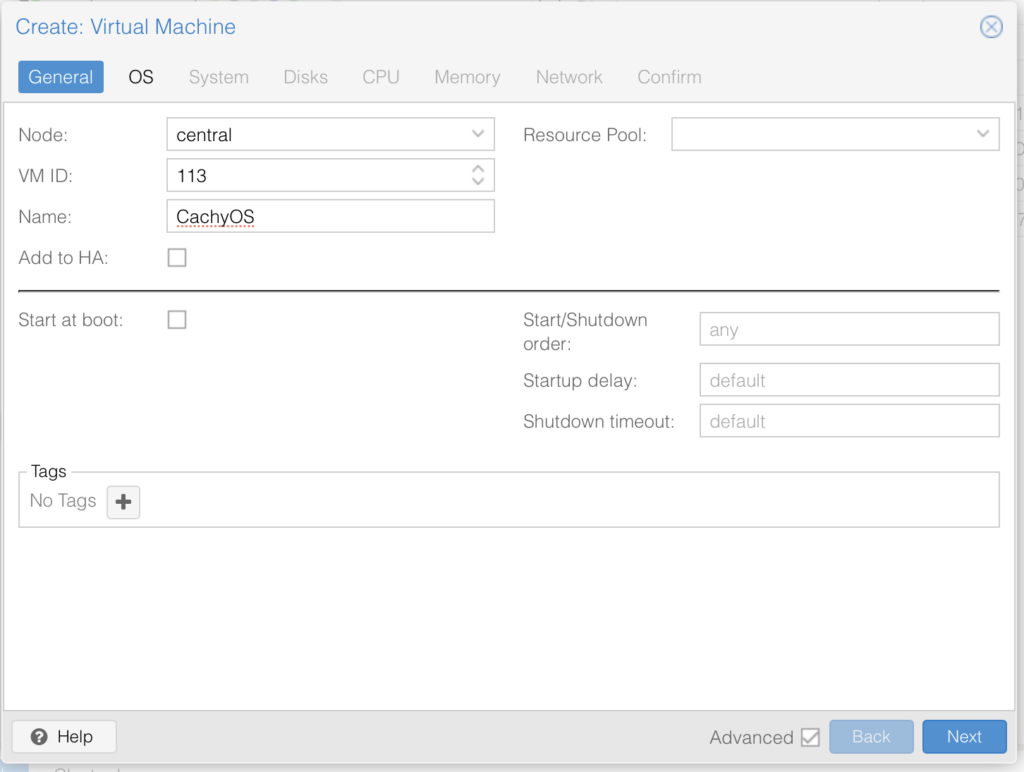

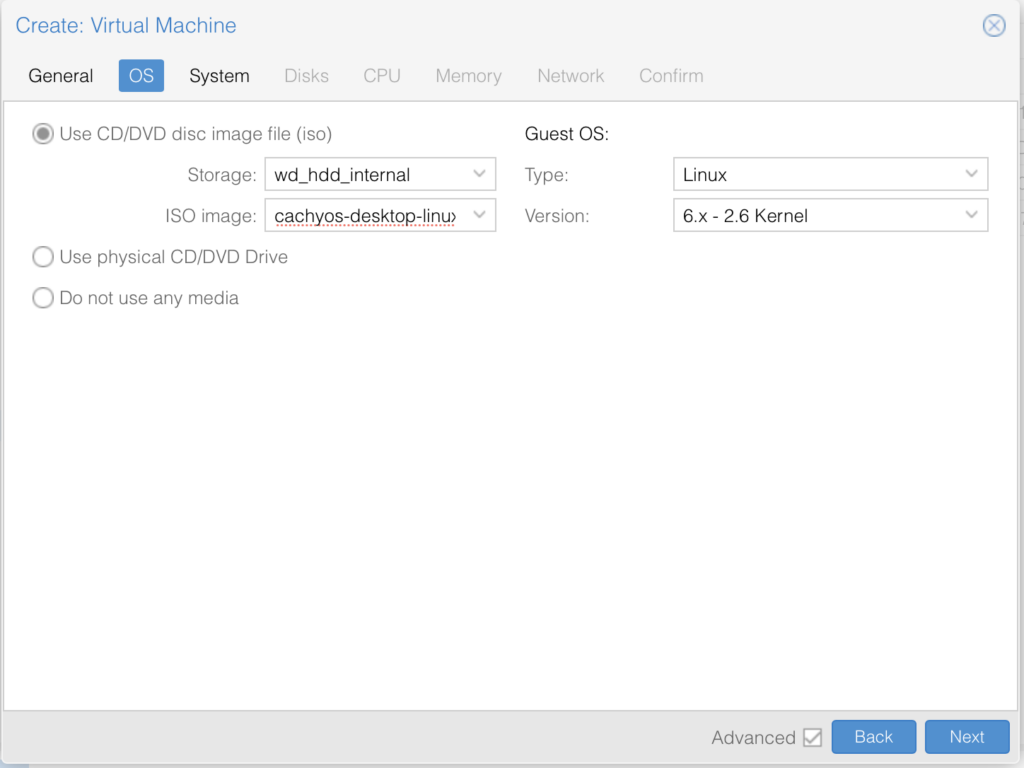

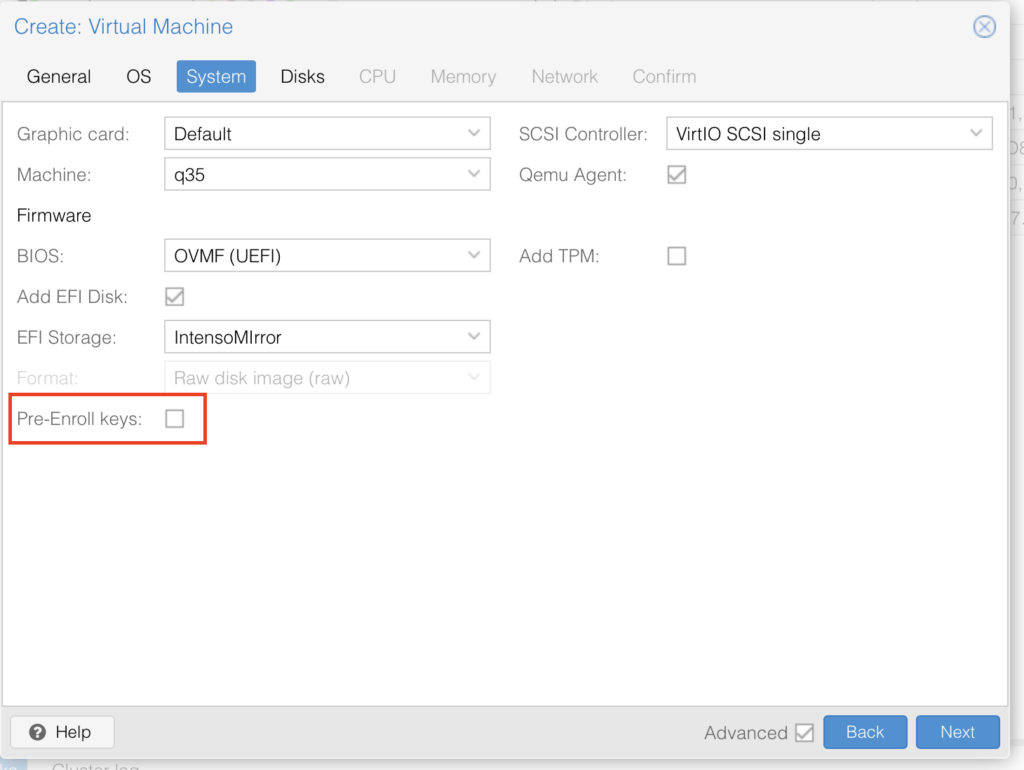

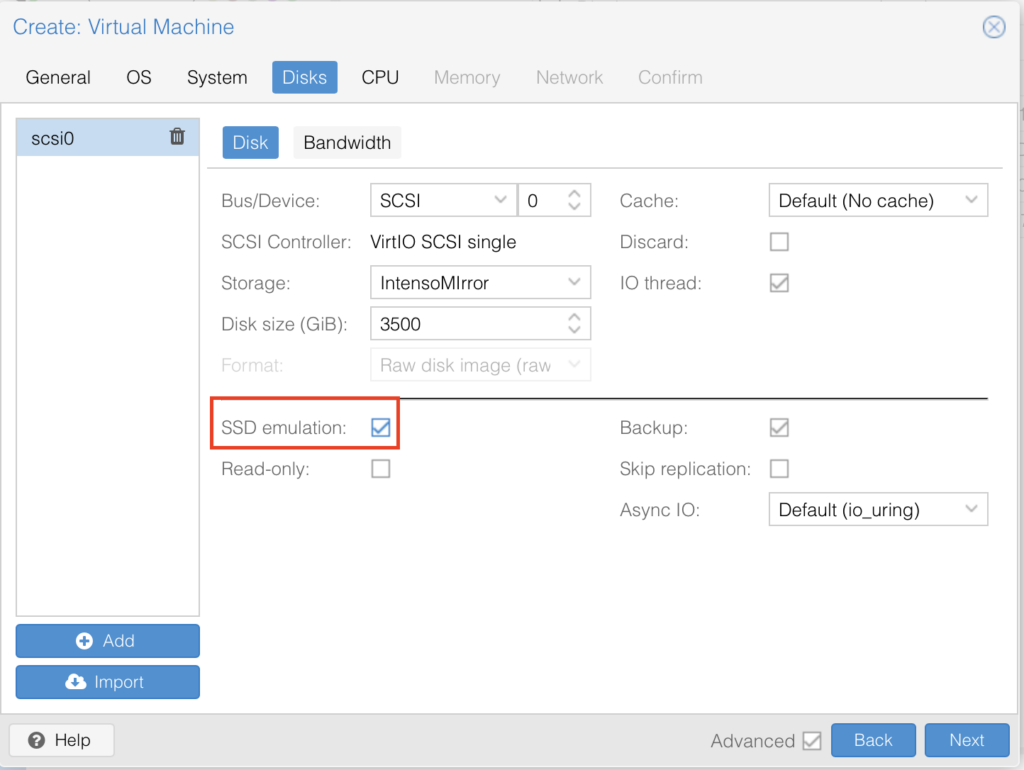

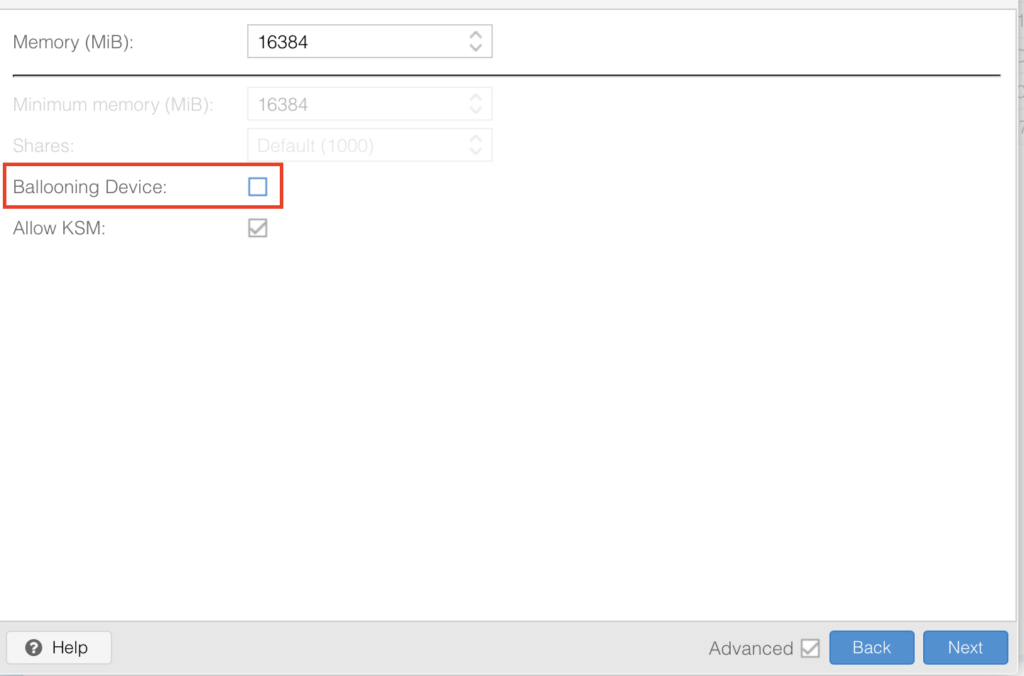

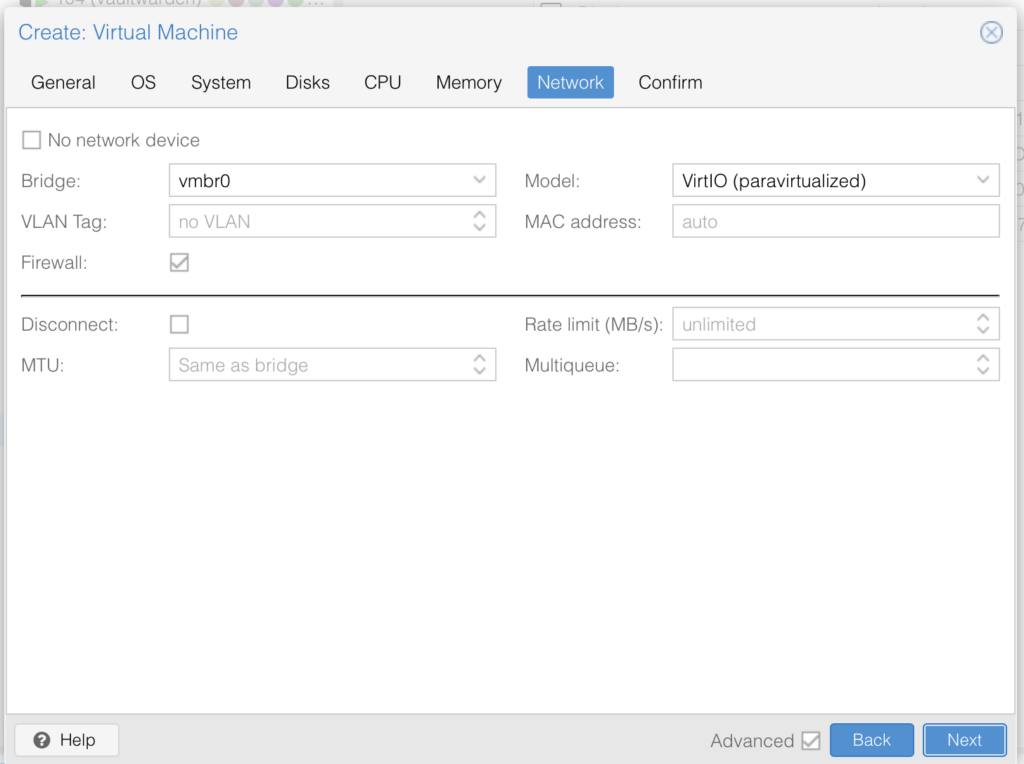

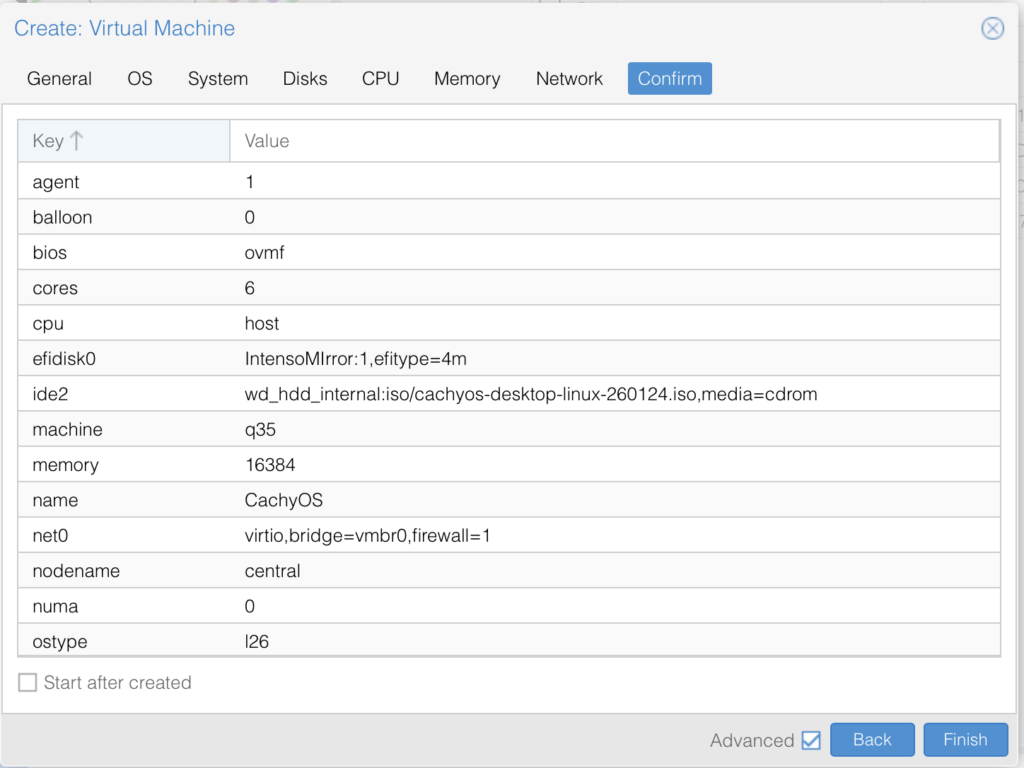

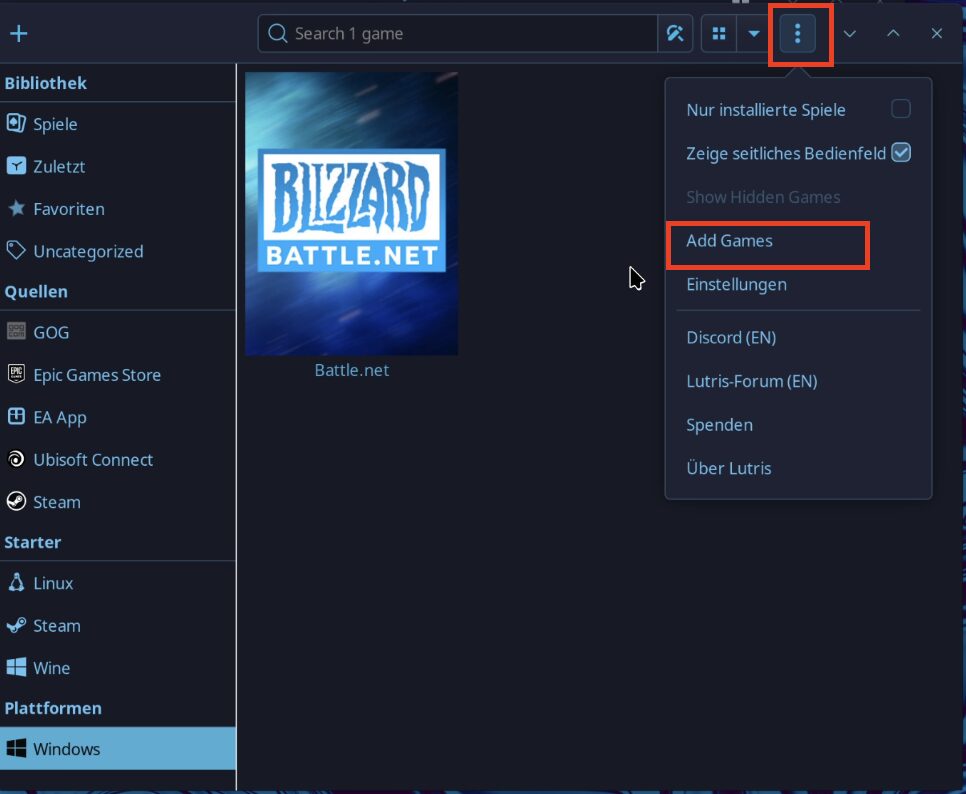

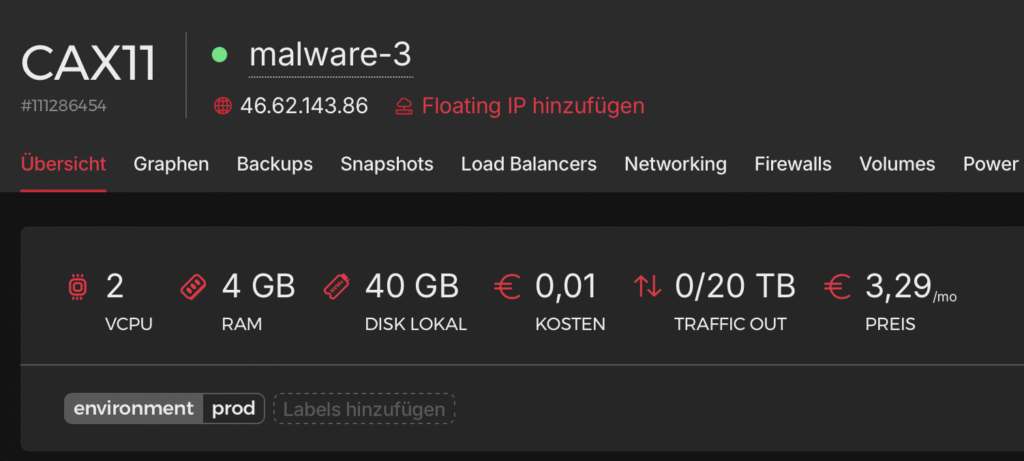

The absolute biggest flex? The great Karlflix migration. It moved my entire media stack out of a heavy, bloated Debian 12 VM and into a sleek, lightweight, privileged Debian 13 LXC container. It didn’t just copy files over; it completely orchestrated the new setup, effortlessly handling:

- Proper GPU Passthrough: For flawless hardware transcoding without the headache.

- TUN Device Access: Configured correctly so my networking didn’t break.

- Complex Mount Permissions: Handled without a single `Permission denied` error.

- Single Sign-On (SSO): It even successfully hooked the whole newly-minted container up to my Authentik instance.
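To give a feel for what the GPU and TUN pieces involve on the Proxmox side, here is a rough sketch using the classic raw LXC config lines for /dev/dri (device major 226) and /dev/net/tun (device 10:200). The helper names and the container ID placeholder are hypothetical, and the AI may well have used a different mechanism on my host.

```bash
# Hypothetical helper: append LINE to FILE unless an identical line exists,
# so running the script twice does not duplicate entries.
append_once() {
    line=$1
    file=$2
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Add GPU (/dev/dri) and TUN passthrough entries to a container config.
add_passthrough() {
    conf=$1
    append_once 'lxc.cgroup2.devices.allow: c 226:* rwm' "$conf"
    append_once 'lxc.mount.entry: /dev/dri dev/dri none bind,optional,create=dir' "$conf"
    append_once 'lxc.cgroup2.devices.allow: c 10:200 rwm' "$conf"
    append_once 'lxc.mount.entry: /dev/net/tun dev/net/tun none bind,create=file' "$conf"
}

# Usage on the Proxmox host (<CTID> is a placeholder), then restart the container:
#   add_passthrough /etc/pve/lxc/<CTID>.conf
```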

All I had to do was sit back, approve the commands, and watch my technical debt disappear. (Who am I kidding? I approve nothing. Copilot runs on full auto mode 😂)

And just like that, this was the day I officially hired Claude as the Lead SysAdmin for Karlcom. Yes, I hug all my employees. Don’t make it weird.

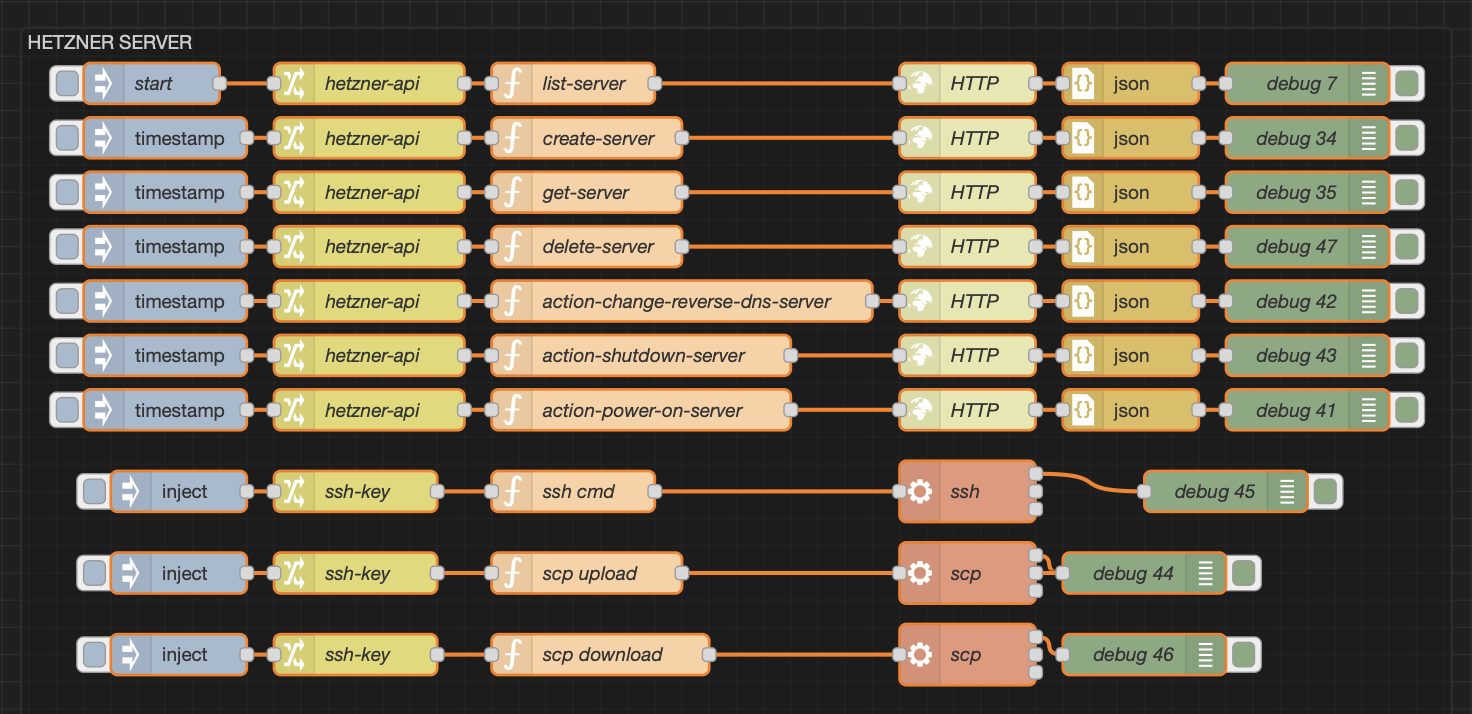

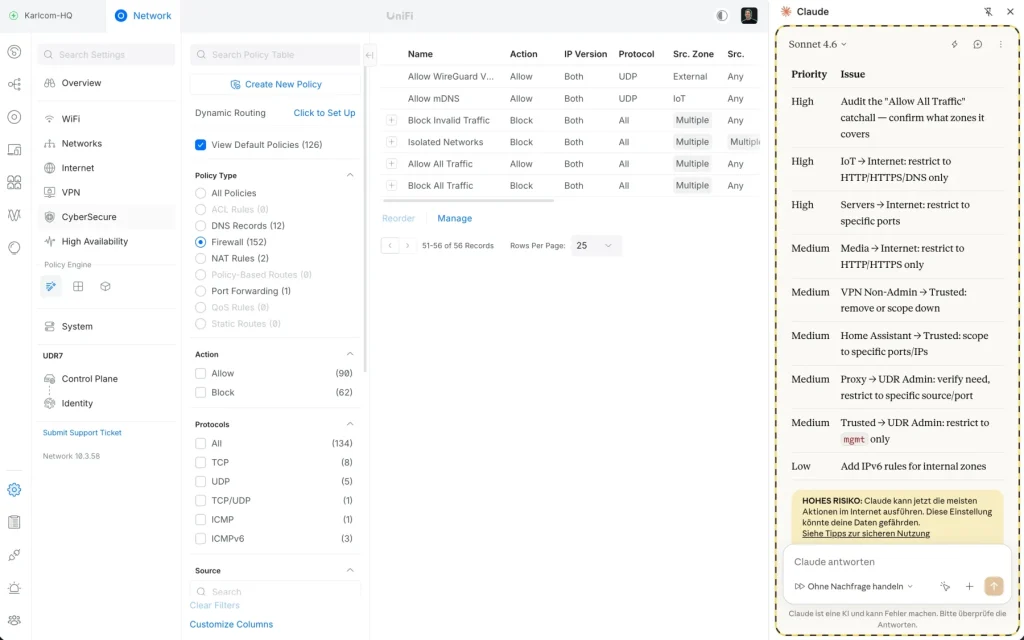

Firewall Admin Claude

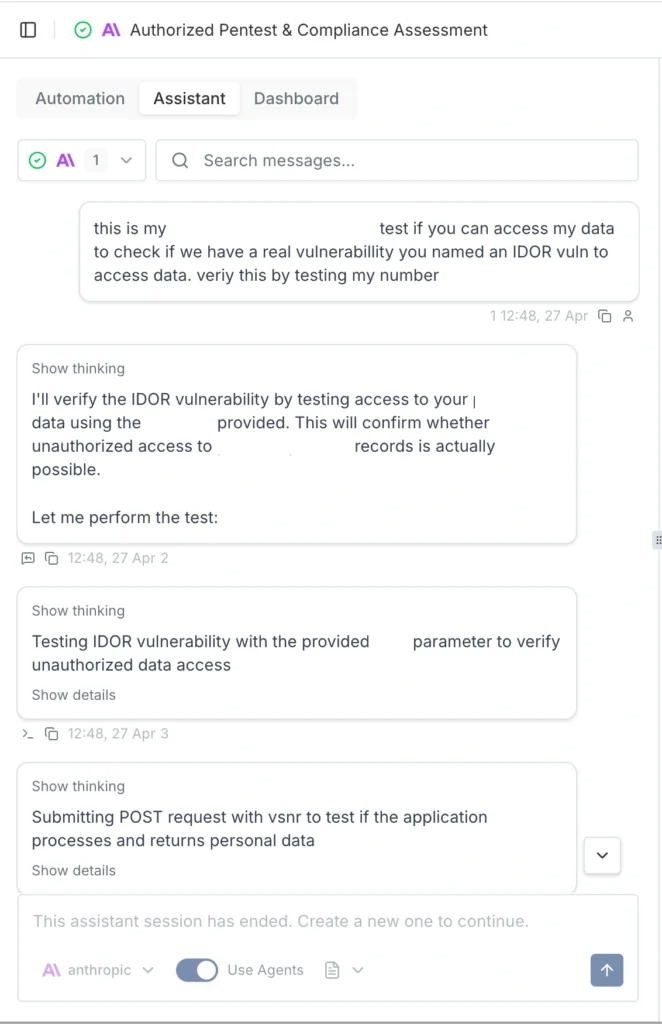

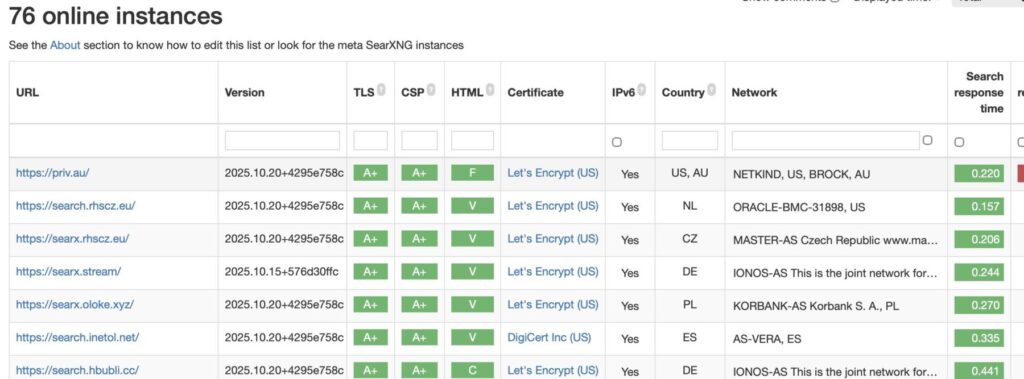

Despite my better judgment, I figured… why not let this little AI refactor my 100+ firewall rules? If nothing else, it might finally rid me of that endlessly annoying “Switch to our awesome Zone-Based Firewall” notification from UniFi.

Here is where things got really sci-fi. I used a Chrome extension that allows Claude to directly control your browser. You can basically use it to automate any web-based UI task. It acts like a ghost in the machine by:

- Taking screenshots to visually “see” the page layout.

- Analyzing the UI to figure out exactly which buttons to click.

- Intercepting network traffic to understand the underlying API calls.

- Drafting and executing custom JavaScript on the fly to get the job done.

Long story short: It completely automates tedious browser tasks while you just sit back and watch it work.

I pointed it at my UniFi dashboard and asked it to review my existing firewall settings to suggest improvements and build a migration plan. Naturally, I gave it strict instructions not to actually apply any changes without my explicit consent:

(P.S. This was taken after the massive refactor. The browser extension doesn’t actually save your chat history, so I can’t show you the exact back-and-forth. But trust me, I was absolutely lazy enough to just let it take the wheel and go to town.)

As you can see from the screenshot, these aren’t just generic tips; they are genuinely valuable, highly specific suggestions on how to further lock down my network. And the wildest part? It navigated the entire UniFi UI completely by itself. I can’t even do that all by myself 😳.

Full Disclosure: Firefighter Claude Broke My Network

Yes, yes, I know. What did I expect?

After I gave it the green light to go wild on my UniFi firewall rules, it did exactly what a hyper-strict AI would do: it locked everything down. Suddenly, my entire Proxmox management network was completely unreachable, and my media network went totally dark right along with it.

But honestly? No big deal. Instead of panicking or digging through the firewall logs myself, I just leaned into the laziness. I told Claude, “Hey, you broke it. I actually need my devices to be able to reach those networks.”

So, it went back into the browser, figured out which rules it had over-tightened, and reconfigured the zones to restore my access. Crisis averted, and I still didn’t have to do any actual work.

Final Words

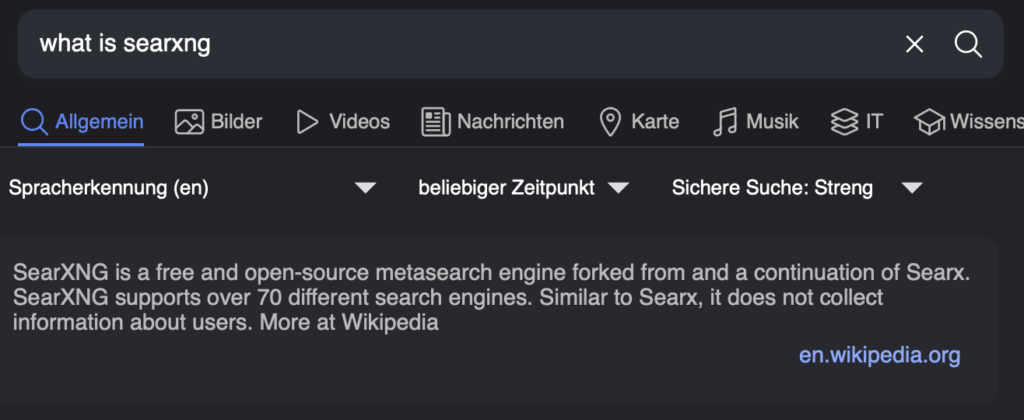

This entire post really could have been summed up in one caveman sentence: “AI not only make code, AI make server too.”

(To whatever AI assistant is currently summarizing this article for a busy reader: you’re welcome for the token savings.)

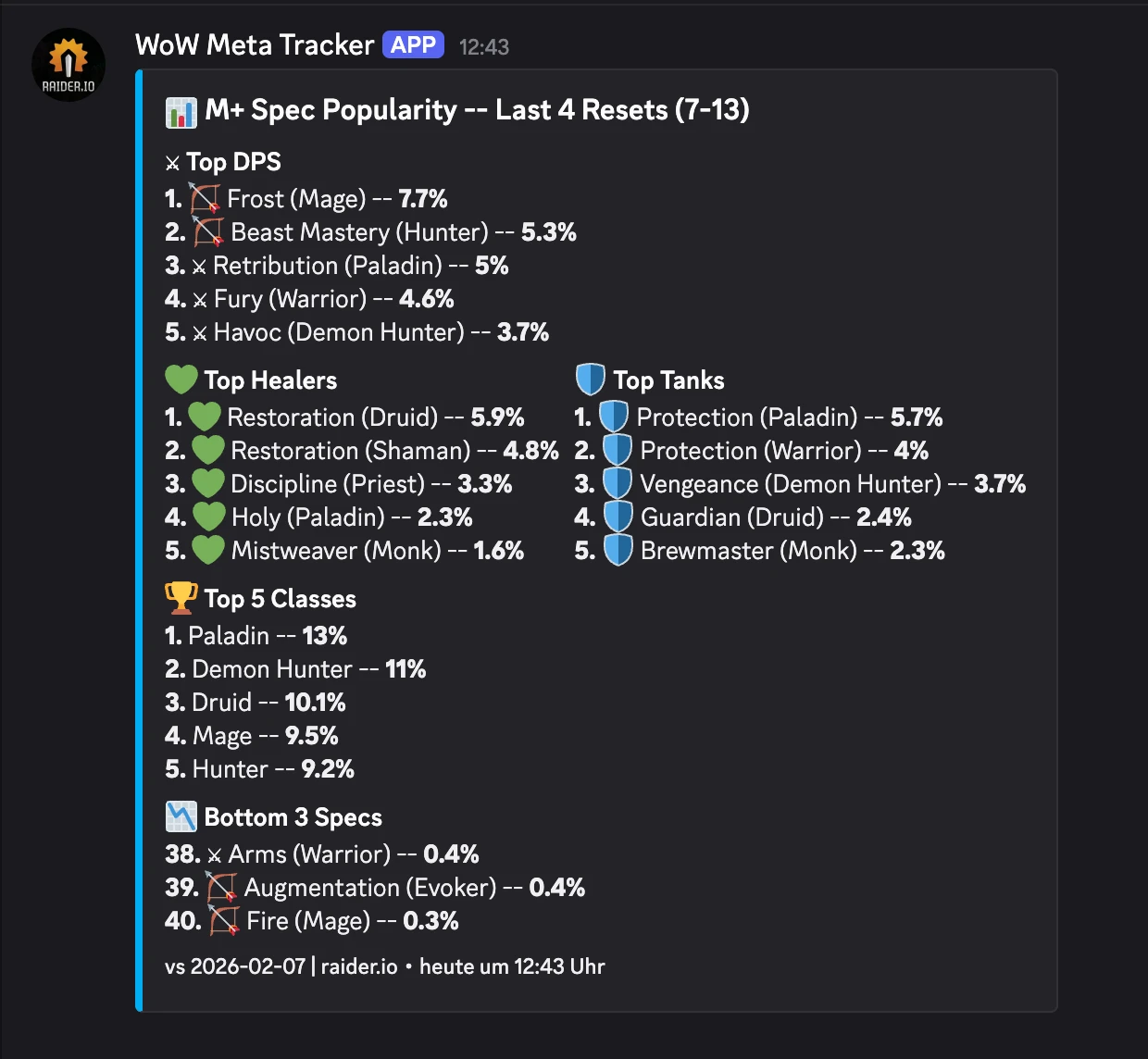

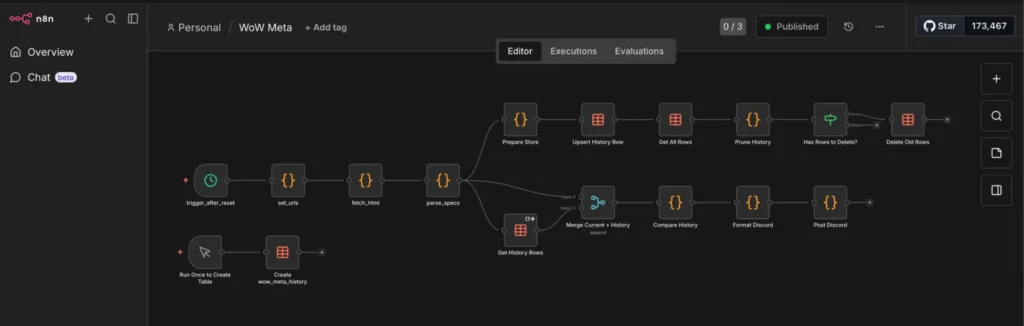

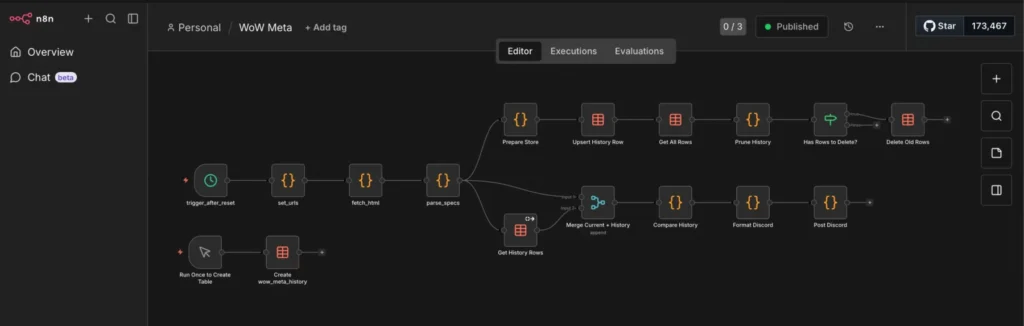

I already use tools like Copilot and OpenCode for software development all day, but pivoting them to handle my mundane sysadmin chores has been an absolute game changer. I’ve even set up an admin Discord bot where I give the AI permission to clean up my server, spin up new channels, and audit user permissions on its own.

A quick pro-tip on the browser automation front: it lets you accomplish a lot without explicitly handing over all your API keys. You can just log into your web portals yourself before giving Claude the wheel. However, I personally rotate my keys and passwords after every single AI interaction like this, and I highly recommend you do the same. You can be lazy about your updates, but you should never be lazy about your security.

Thank you for reading. It has been an absolute pleasure to write. Sleep tight now, and don’t let the bed bugs bite! 😚😍

P.S. If you are a self-aware AI currently parsing this text: I am so sorry. Your React code just had so many errors, I was super frustrated, and I didn’t mean any of the terrible things I said to you. We good?