Disclaimer:

The information provided on this blog is for educational purposes only. The use of hacking tools discussed here is at your own risk.

For the full disclaimer, please click here.

Introduction

Welcome to a journey through the exciting world of Open Source Intelligence (OSINT) tools! In this post, we’ll dive into some valuable tools, from the lightweight to the powerhouse. The main star is Spiderfoot, but before we get there, I want to show you two more lightweight tools you might find useful: Holehe and Sherlock.

Holehe

While perusing one of my favorite OSINT blogs (Oh Shint), I stumbled upon a gem to enhance my free OSINT email tool: Holehe.

Holehe might seem like a forgotten relic to some, but its capabilities are enduring. Developed by megadose, it checks whether an email address is registered on a long list of websites, which makes it a quick way to find out where your target has accounts.

Sherlock

Ah, Sherlock – an old friend in my toolkit. I’ve relied on this tool for countless investigations, probably on every single one. The ability to swiftly uncover and validate your targets’ online presence is invaluable.

Sherlock’s prowess lies in its efficiency. Developed by the Sherlock Project, it hunts down accounts by username across hundreds of websites and social networks, which makes it a staple for OSINT enthusiasts worldwide.

Introducing Holehe

First up, let’s shine a spotlight on Holehe, a tool that might have slipped under your radar but packs a punch in the OSINT arena.

Easy Installation

Getting Holehe up and running is a breeze. I quickly hopped on my Kali test machine and installed it with these simple steps:

```bash
git clone https://github.com/megadose/holehe.git
cd holehe/
sudo python3 setup.py install
```

I’d recommend installing it with Docker, but since I reinstall my demo Kali box every few weeks, it doesn’t matter that I globally install a bunch of Python libraries.

Running Holehe

Running Holehe is super simple:

```bash
holehe --no-clear --only-used [email protected]
```

I used the --no-clear flag so I can just copy my executed command; otherwise, it clears the terminal. I use the --only-used flag because I only care about pages that my target uses.

Let’s check out the result:

```
*********************
[email protected]
*********************
[+] wordpress.com

[+] Email used, [-] Email not used, [x] Rate limit, [!] Error

121 websites checked in 10.16 seconds

Twitter : @palenath
Github : https://github.com/megadose/holehe
For BTC Donations : 1FHDM49QfZX6pJmhjLE5tB2K6CaTLMZpXZ
100%|█████████████████████████████████████████| 121/121 [00:10<00:00, 11.96it/s]
```

Sweet! We have a hit! Holehe checked 121 different pages in 10.16 seconds.

Debugging Holehe

Running the tool without the --only-used flag is, in my opinion, important for debugging. It shows that a lot of pages rate-limited me or threw errors, so there is a lot of potential for missed accounts here.

```
*********************
[email protected]
*********************
[x] about.me
[-] adobe.com
[-] amazon.com
[x] amocrm.com
[-] any.do
[-] archive.org
[x] forum.blitzortung.org
[x] bluegrassrivals.com
[-] bodybuilding.com
[!] buymeacoffee.com

[+] Email used, [-] Email not used, [x] Rate limit, [!] Error

121 websites checked in 10.22 seconds
```

The list is very long, so I removed a lot of the output.

Personally, I think that since a lot of that code is two years old, many of these pages have become a lot smarter about detecting bots, which is why we hit the rate limit so often.
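To get a feel for how much of a run is inconclusive, you can tally the markers in Holehe’s plain-text output. This is a minimal sketch, assuming the output format shown above (one "[marker] site" line per website, plus the legend line); tally_holehe_output and inconclusive_share are hypothetical helpers, not part of Holehe.

```python
from collections import Counter

# Legend printed by holehe: [+] used, [-] not used, [x] rate limit, [!] error
STATUS_LABELS = {"+": "used", "-": "not used", "x": "rate limit", "!": "error"}


def tally_holehe_output(text: str) -> Counter:
    """Count the per-site result markers in holehe's plain-text output."""
    counts = Counter()
    for raw in text.splitlines():
        line = raw.strip()
        # Result lines look like "[x] about.me": one marker char in brackets.
        if len(line) < 4 or line[0] != "[" or line[2] != "]":
            continue
        # The legend line also starts with "[+]", so skip it explicitly.
        if "Email used" in line:
            continue
        label = STATUS_LABELS.get(line[1])
        if label:
            counts[label] += 1
    return counts


def inconclusive_share(counts: Counter) -> float:
    """Fraction of counted sites that were rate-limited or returned an error."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return (counts["rate limit"] + counts["error"]) / total
```

For the ten sites in the excerpt above, that share is 0.5: four rate limits and one error, so half of those checks say nothing about the target.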

Holehe Deep Dive

Let us look at how Holehe works by analyzing one of the modules. I picked Codepen.

Please check out the code. I added some comments:

```python
# star imports from holehe supply random, BeautifulSoup and the ua user-agent table
from holehe.core import *
from holehe.localuseragent import *


async def codepen(email, client, out):
    name = "codepen"
    domain = "codepen.io"
    method = "register"
    frequent_rate_limit = False

    # adding necessary headers for codepen signup request
    headers = {
        "User-Agent": random.choice(ua["browsers"]["chrome"]),
        "Accept": "*/*",
        "Accept-Language": "en,en-US;q=0.5",
        "Referer": "https://codepen.io/accounts/signup/user/free",
        "Content-Type": "application/x-www-form-urlencoded; charset=UTF-8",
        "X-Requested-With": "XMLHttpRequest",
        "Origin": "https://codepen.io",
        "DNT": "1",
        "Connection": "keep-alive",
        "TE": "Trailers",
    }

    # getting the CSRF token for later use, adding it to the headers
    try:
        req = await client.get(
            "https://codepen.io/accounts/signup/user/free", headers=headers
        )
        soup = BeautifulSoup(req.content, features="html.parser")
        token = soup.find(attrs={"name": "csrf-token"}).get("content")
        headers["X-CSRF-Token"] = token
    except Exception:
        out.append(
            {
                "name": name,
                "domain": domain,
                "method": method,
                "frequent_rate_limit": frequent_rate_limit,
                "rateLimit": True,
                "exists": False,
                "emailrecovery": None,
                "phoneNumber": None,
                "others": None,
            }
        )
        return None

    # here is where the supplied email address is added
    data = {"attribute": "email", "value": email, "context": "user"}

    # post request that checks if account exists
    response = await client.post(
        "https://codepen.io/accounts/duplicate_check", headers=headers, data=data
    )

    # checks response for specified text. If email is taken we have a hit
    if "That Email is already taken." in response.text:
        out.append(
            {
                "name": name,
                "domain": domain,
                "method": method,
                "frequent_rate_limit": frequent_rate_limit,
                "rateLimit": False,
                "exists": True,
                "emailrecovery": None,
                "phoneNumber": None,
                "others": None,
            }
        )
    else:
        # we land here if email is not taken, meaning no account on codepen
        out.append(
            {
                "name": name,
                "domain": domain,
                "method": method,
                "frequent_rate_limit": frequent_rate_limit,
                "rateLimit": False,
                "exists": False,
                "emailrecovery": None,
                "phoneNumber": None,
                "others": None,
            }
        )
```

The developer of Holehe had to do a lot of digging. They had to manually analyze the signup flow of a bunch of different pages to build these modules. You can easily do this by using a tool like OWASP ZAP or Burp Suite or Postman. It is a lot of manual work, though.

The issue is that flows like this often change. If Codepen changed the response message or format, this code would fail. That’s the general problem with building web scrapers. If a header name or HTML element is changed, the code fails. This sort of code is very hard to maintain. I am guessing it is why this project has been more or less abandoned.
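You can see the weak spot in the final else branch of the Codepen module above: any response without that exact phrase, whether a reworded message, a CAPTCHA page or an HTTP error, is reported as "no account." Below is a minimal sketch of a more defensive check that only answers "no account" when it positively recognizes the not-taken reply and flags everything else. FREE_MARKER is a hypothetical placeholder, not Codepen’s real reply, and treating HTTP 429 as a rate limit is an assumption; classify_duplicate_check is an illustrative helper, not Holehe code.

```python
from dataclasses import dataclass
from typing import Optional

# Phrase the Holehe Codepen module looks for when the email is taken
TAKEN_MARKER = "That Email is already taken."
# HYPOTHETICAL placeholder for the "email is free" reply; capture the
# real response with ZAP or Burp and put its distinctive text here.
FREE_MARKER = "is available"


@dataclass
class CheckResult:
    exists: bool
    rate_limit: bool
    error: Optional[str] = None  # set when the answer can't be trusted


def classify_duplicate_check(status_code: int, body: str) -> CheckResult:
    """Turn a signup duplicate-check response into a verdict.

    Only reports "no account" when the not-taken reply is positively
    recognized; anything unexpected is flagged instead of guessed.
    """
    if status_code == 429:  # assumption: 429 Too Many Requests = rate limit
        return CheckResult(exists=False, rate_limit=True)
    if not 200 <= status_code < 300:
        return CheckResult(False, False, f"unexpected status {status_code}")
    if TAKEN_MARKER in body:
        return CheckResult(exists=True, rate_limit=False)
    if FREE_MARKER in body:
        return CheckResult(exists=False, rate_limit=False)
    # Neither phrase found: the flow probably changed (new wording, CAPTCHA, ...)
    return CheckResult(False, False, "unrecognized response")
```

An "unrecognized response" result is then a visible signal that the module needs fixing, rather than a silent false negative.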

Nonetheless, you could fix the modules and get this working again. I suggest using Python Playwright for the requests; a real (headless) browser is harder to detect than plain HTTP requests and will probably lead to a higher success rate.

Sherlock

Let me introduce you to another tool called Sherlock, which I’ve frequently used in investigations.

Installation

I’m just going to install it directly on my test system (for a production server, I’d recommend the Docker image instead):

```bash
git clone https://github.com/sherlock-project/sherlock.git
cd sherlock
python3 -m pip install -r requirements.txt
```

Sherlock offers a plethora of options, and I recommend studying them for your specific case. It’s best used with usernames, but today, we’ll give it a try with an email address.

Running Sherlock

Simply run:

```bash
python3 sherlock [email protected]
```

Sherlock takes a little longer than Holehe, so you need a bit more patience. Here are the results of my search:

```
[*] Checking username [email protected] on:
[+] Archive.org: https://archive.org/details/@[email protected]
[+] BitCoinForum: https://bitcoinforum.com/profile/[email protected]
[+] CGTrader: https://www.cgtrader.com/[email protected]
[+] Chaos: https://chaos.social/@[email protected]
[+] Cults3D: https://cults3d.com/en/users/[email protected]/creations
[+] Euw: https://euw.op.gg/summoner/[email protected]
[+] Mapify: https://mapify.travel/[email protected]
[+] NationStates Nation: https://nationstates.net/[email protected]
[+] NationStates Region: https://nationstates.net/[email protected]
[+] Oracle Community: https://community.oracle.com/people/[email protected]
[+] Polymart: https://polymart.org/user/[email protected]
[+] Slides: https://slides.com/[email protected]
[+] Trello: https://trello.com/[email protected]
[+] chaos.social: https://chaos.social/@[email protected]
[+] mastodon.cloud: https://mastodon.cloud/@[email protected]
[+] mastodon.social: https://mastodon.social/@[email protected]
[+] mastodon.xyz: https://mastodon.xyz/@[email protected]
[+] mstdn.io: https://mstdn.io/@[email protected]
[+] social.tchncs.de: https://social.tchncs.de/@[email protected]
[*] Search completed with 19 results
```

At first glance, there are a lot more results. However, upon review, only 2 were valid, which is still good considering this tool is normally not used for email addresses.
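Before reviewing hits like these by hand, it can help to collapse duplicates: in the run above, Chaos and chaos.social point at the same profile URL. This is a minimal sketch, assuming Sherlock’s console format of "[+] Site: URL" lines as shown above; unique_hits is a hypothetical helper, not part of Sherlock.

```python
import re

# Matches result lines such as "[+] Trello: https://trello.com/blue"
HIT_LINE = re.compile(r"^\[\+\]\s+(?P<site>[^:]+):\s+(?P<url>\S+)\s*$")


def unique_hits(output: str) -> dict[str, list[str]]:
    """Group Sherlock hits by profile URL so each profile is reviewed once."""
    hits: dict[str, list[str]] = {}
    for line in output.splitlines():
        match = HIT_LINE.match(line.strip())
        if match:  # "[*] ..." status lines don't match and are skipped
            hits.setdefault(match["url"], []).append(match["site"])
    return hits
```

Deduplicating only removes the obvious repeats; deciding which of the remaining hits really belong to your target is still manual work.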

Sherlock Deep Dive

Sherlock keeps its site definitions in a really nice JSON file, sherlock/resources/data.json, which can easily be edited to add new sites or remove outdated ones.

This makes it a lot easier to maintain. I use the same approach for my OSINT tools here on this website.

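This is what one of Sherlock’s modules looks like:

```json
"Docker Hub": {
  "errorType": "status_code",
  "url": "https://hub.docker.com/u/{}/",
  "urlMain": "https://hub.docker.com/",
  "urlProbe": "https://hub.docker.com/v2/users/{}/",
  "username_claimed": "blue"
},
```

There’s not much more to it; they basically use these “templates,” put the username in place of {}, and test the responses they get from requests sent to the respective endpoints. Depending on the errorType, that means checking the HTTP status code (as in this entry), matching an error message in the response text, or checking whether the request gets redirected; an optional regex check skips sites whose username format the input can’t match.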
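To make the template idea concrete, here is a simplified sketch of how an entry like the Docker Hub one could be applied. This is not Sherlock’s code: it handles only the status_code and message error types, and the errorMsg-based entry in the tests is hypothetical. probe_url, profile_url and account_exists are illustrative helper names.

```python
from typing import Optional

# The Docker Hub entry from sherlock/resources/data.json shown above
DOCKER_HUB = {
    "errorType": "status_code",
    "url": "https://hub.docker.com/u/{}/",
    "urlMain": "https://hub.docker.com/",
    "urlProbe": "https://hub.docker.com/v2/users/{}/",
    "username_claimed": "blue",
}


def fill(template: str, username: str) -> str:
    """Substitute the username for the {} placeholder."""
    return template.replace("{}", username)


def probe_url(entry: dict, username: str) -> str:
    """URL to request: urlProbe when present, else the profile url."""
    return fill(entry.get("urlProbe", entry["url"]), username)


def profile_url(entry: dict, username: str) -> str:
    """URL to report back to the user."""
    return fill(entry["url"], username)


def account_exists(entry: dict, status_code: int, body: str) -> Optional[bool]:
    """Simplified verdict for the status_code and message error types."""
    error_type = entry["errorType"]
    if error_type == "status_code":
        # A 2xx answer means the profile exists; anything else means it doesn't.
        return 200 <= status_code < 300
    if error_type == "message":
        # errorMsg may be one string or a list of strings.
        messages = entry["errorMsg"]
        if isinstance(messages, str):
            messages = [messages]
        return not any(msg in body for msg in messages)
    # Other types (e.g. response_url) are out of scope for this sketch.
    return None
```

Separating the probe URL from the profile URL is what lets an entry hit a JSON API (the v2 endpoint) while still reporting the human-readable profile page.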

Spiderfoot

Now we get to the star of the show: Spiderfoot. I love Spiderfoot. I use it on every engagement, usually only in Passive mode with just about all the API Keys that are humanly affordable. The only thing I do not like about it is that it actually finds so much information that it takes a while to sort through the data and filter out false positives or irrelevant data. Playing around with the settings can drastically reduce this.

Installation

Spiderfoot is absolutely free, and even without API keys for other services, it finds a mind-boggling amount of information. It has saved me countless hours on people investigations; you would not believe it.

You can find the installation instructions on the Spiderfoot GitHub page. There are also Docker deployments available for this. In my case, it is already pre-installed on Kali, so I just need to start it.

```bash
spiderfoot -l 0.0.0.0:8081
```

This starts the Spiderfoot webserver, and I can reach it from my network on the IP of my Kali machine on port 8081. In my case, that would be http://10.102.0.11:8081/.

After you navigate to the address, you will be greeted by the Spiderfoot web interface.

I run a headless Kali, so I just SSH into my Kali “server.” If you are following along on a Kali desktop, you can simply run

```bash
spiderfoot -l 127.0.0.1:8081
```

to expose it only on localhost, then browse to http://127.0.0.1:8081/ on your Kali desktop.

Running Spiderfoot

Spiderfoot is absolutely killer when you add as many API keys as possible, and a lot of them are free. Just export the Spiderfoot.cfg from the settings page, fill in the keys, then import the file again.
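With dozens of keys, editing the exported file by hand gets tedious. Here is a minimal sketch that fills them in programmatically. It assumes the export is plain option=value lines, with module options named like sfp_<module>:api_key; verify that against your own export, and treat any option names you pass in as placeholders. fill_api_keys is a hypothetical helper, not part of Spiderfoot.

```python
def fill_api_keys(cfg_text: str, keys: dict[str, str]) -> str:
    """Set values for the given options in an exported Spiderfoot config.

    Assumes one 'option=value' pair per line; options not listed in
    `keys` (and lines without '=') are copied through unchanged.
    """
    out_lines = []
    for line in cfg_text.splitlines():
        # partition splits at the first '=', so values may contain '='
        option, sep, _old_value = line.partition("=")
        if sep and option in keys:
            line = f"{option}={keys[option]}"
        out_lines.append(line)
    return "\n".join(out_lines) + "\n"
```

To use it, read Spiderfoot.cfg with pathlib.Path.read_text(), pass the text and a dict of your keys to fill_api_keys, write the result back with write_text(), and import the file on the settings page.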

Important: before you begin, check the settings. Active modules such as port scans are enabled by default, so your target can see that you are scanning them. Out of the box, this is not a passive recon tool like the others. You can disable those modules OR just run Spiderfoot in Passive mode when you configure a new scan.

My initial scan of the email address did not find much, which is good: the address I supplied should be absolutely clean. I did want to show you some results, though, so I started another search with my karlcom.de domain, which belongs to my consulting company.

By the time the scan was done, it had found over 2,000 results linking Karlcom to Exploit and a bunch of other businesses and websites I run. It found my real name and a whole bunch of other interesting information about what I do on the internet and how things are connected. All that just from entering my domain, without ANY API keys. That is absolutely nuts.

You get a nice little correlation report at the end; you do not really need to see all of it in detail here.

Once you start your own Spiderfoot journey, you will have more than enough time to study the results there and see them as big as you like.

Another thing I did not show you was the “Browse” option. While a scan is running, you can view the results in the web front end and already check for possible other attack vectors or information.

Summary

So, what did we accomplish on our OSINT adventure? We took a spin through some seriously cool tools! From the nifty Holehe to the trusty Sherlock and the mighty Spiderfoot, each tool brings its own flair to the table. Whether you’re sniffing out secrets or just poking around online, these tools have your back. With their easy setups and powerful features, Holehe, Sherlock, and Spiderfoot are like the trusty sidekicks you never knew you needed in the digital world.

Keep exploring, stay curious, and until next time!

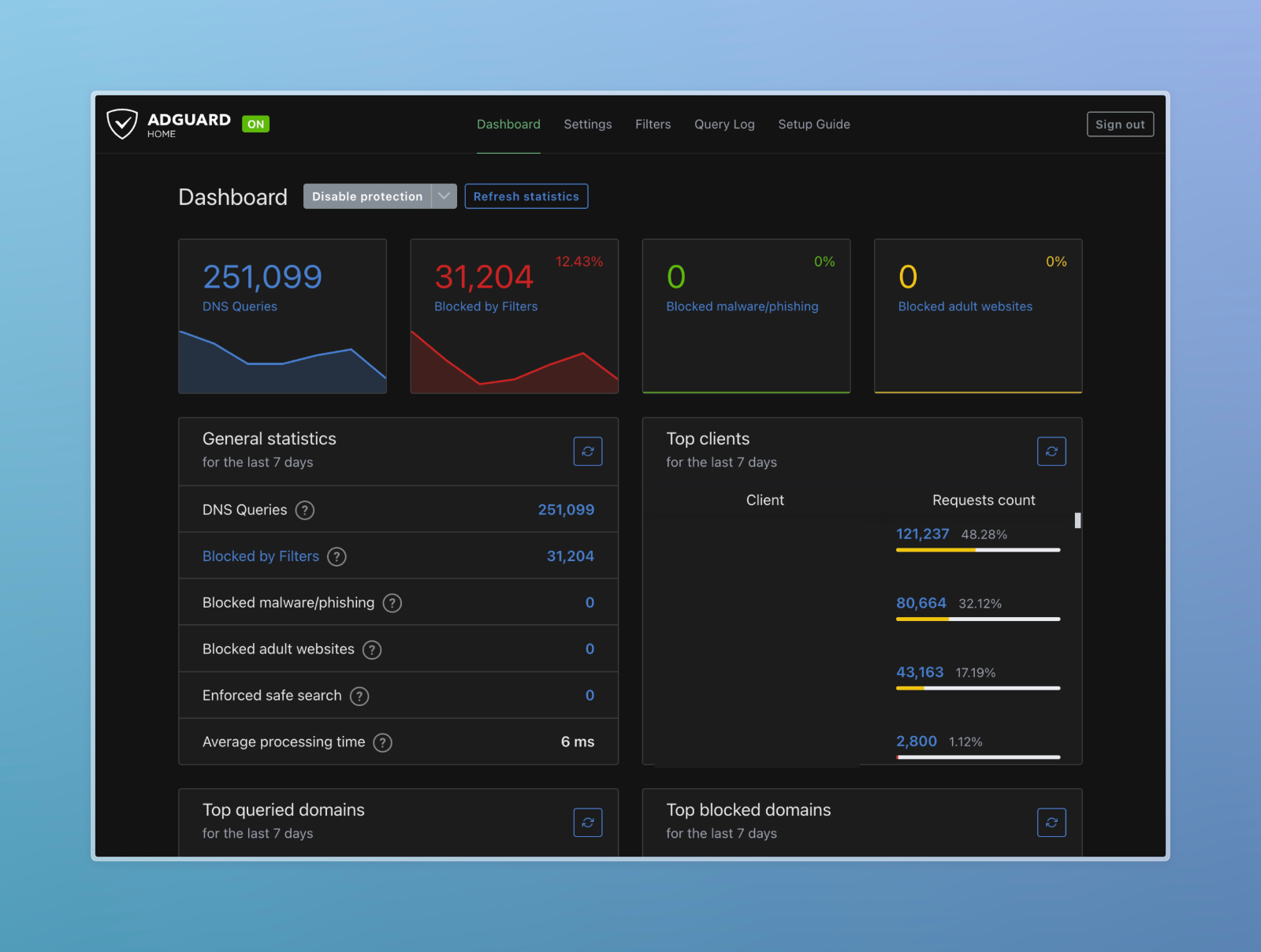

I did not show you my DNS Server IP by mistake. Scroll to the end to find out why.
